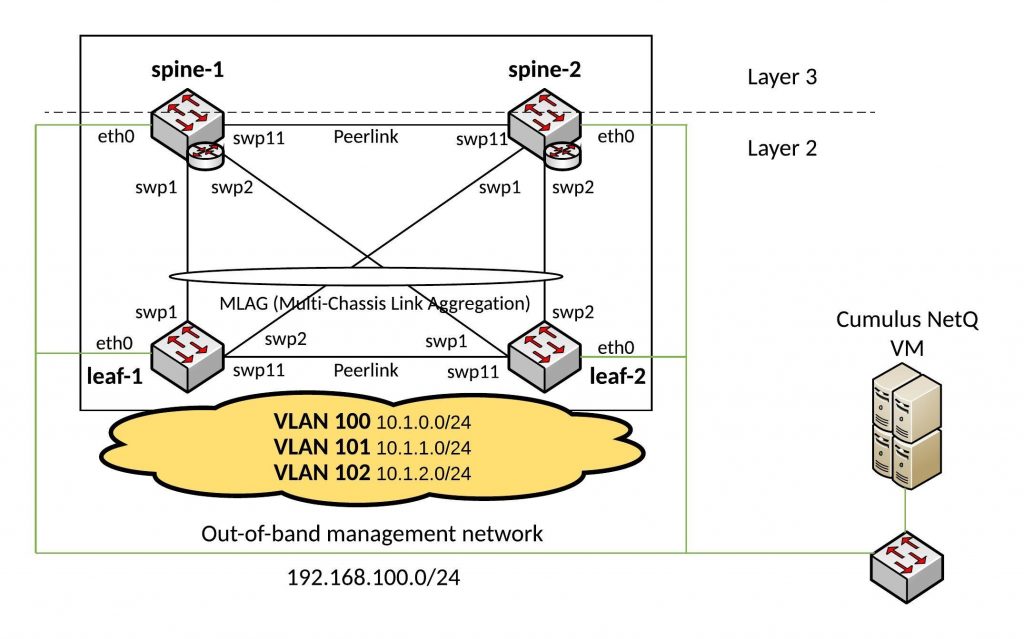

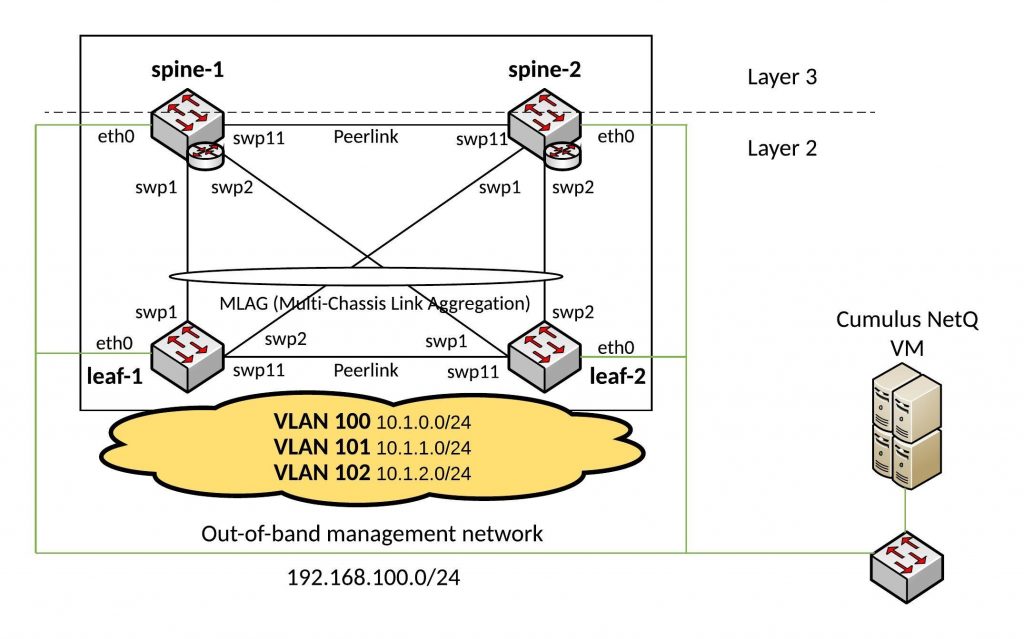

I had some time to play around with NetQ, the new tool from Cumulus that checks your Cumulus Linux switch fabric.

I did some testing with my Cumulus Layer 2 Fabric example: Ansible Playbook for Cumulus Linux (Layer 2 Fabric)

You need to download the NetQ VM from Cumulus as a VMware or VirtualBox template.

It is a great tool to centrally check your Cumulus switches and keep a history of changes in your environment. NetQ can send out notifications about changes in your fabric, which is nice because you are always up to date on what is going on in your network.

Installing NetQ agent on a Cumulus Linux Switch:

    cumulus@spine-1:~$ sudo apt-get update
    cumulus@spine-1:~$ sudo apt-get install cumulus-netq -y

Configuring the NetQ Agent on a switch:

    cumulus@spine-1:~$ sudo systemctl restart rsyslog
    cumulus@spine-1:~$ netq add server 192.168.100.133
    cumulus@spine-1:~$ netq agent restart

I will write a small Ansible script in the next few days to automate the agent installation and configuration.
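
Until that automation exists, the manual steps above can at least be collected in one place. The following is a minimal sketch, not an Ansible playbook: `agent_setup_commands` is a hypothetical helper name, and it only builds the command strings shown in this post without running anything on a switch.

```python
def agent_setup_commands(netq_server):
    """Return the per-switch commands from this post, in order.

    Hypothetical helper: it only builds strings and executes nothing.
    """
    return [
        "sudo apt-get update",
        "sudo apt-get install cumulus-netq -y",
        "sudo systemctl restart rsyslog",
        f"netq add server {netq_server}",
        "netq agent restart",
    ]


if __name__ == "__main__":
    # Switch names and the NetQ VM address are the ones used in this lab.
    for switch in ["spine-1", "spine-2", "leaf-1", "leaf-2"]:
        print(f"# {switch}")
        for command in agent_setup_commands("192.168.100.133"):
            print(command)
```

The printed list can be pasted into an SSH session per switch, or used as a checklist when writing the real playbook.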

Connect to the Cumulus NetQ VM and check agent connectivity:

    admin@cumulus:~$ netq-shell
    Welcome to Cumulus (R) NetQ Command Line Interface
    TIP: Type `netq help` to get started.
    netq@dc9163c7044e:/$ netq show agents
    Node     Status    Sys Uptime    Agent Uptime
    -------  --------  ------------  --------------
    leaf-1   Fresh     1h ago        1h ago
    leaf-2   Fresh     1h ago        1h ago
    spine-1  Fresh     1h ago        1h ago
    spine-2  Fresh     1h ago        1h ago
    netq@dc9163c7044e:/$
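
If you want to check the agent list from a script instead of reading it by eye, the table can be parsed as plain text. This is a minimal sketch that assumes you have pasted only the table (header, separator and rows, no shell prompts) into a string. The function names are hypothetical, and whitespace splitting is just one simple way to read this output, not an official NetQ interface.

```python
SAMPLE = """\
Node     Status    Sys Uptime    Agent Uptime
-------  --------  ------------  --------------
leaf-1   Fresh     1h ago        1h ago
leaf-2   Fresh     1h ago        1h ago
spine-1  Fresh     1h ago        1h ago
spine-2  Fresh     1h ago        1h ago
"""


def parse_agents(text):
    """Map node name to agent status from `netq show agents` table text."""
    agents = {}
    for line in text.splitlines():
        fields = line.split()
        # Skip blank lines, the header row and the dashed separator row.
        if len(fields) < 2 or fields[0] == "Node" or set(fields[0]) == {"-"}:
            continue
        agents[fields[0]] = fields[1]
    return agents


def missing_or_not_fresh(agents, expected):
    """Return expected nodes that are absent or report a status other than Fresh."""
    return sorted(node for node in expected if agents.get(node) != "Fresh")


if __name__ == "__main__":
    agents = parse_agents(SAMPLE)
    # All four lab switches report Fresh, so this prints an empty list.
    print(missing_or_not_fresh(agents, ["leaf-1", "leaf-2", "spine-1", "spine-2"]))
```

Passing the list of switches you expect makes a switch whose agent never registered show up as a problem too, not only one with a bad status.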

Basic Show Commands:

    netq@dc9163c7044e:/$ netq show clag
    Matching CLAG session records are:
    Node             Peer             SysMac            State Backup #Links #Dual Last Changed
    ---------------- ---------------- ----------------- ----- ------ ------ ----- --------------
    leaf-1           leaf-2(P)        44:38:39:ff:40:93 up    up     1      1     8m ago
    leaf-2(P)        leaf-1           44:38:39:ff:40:93 up    up     1      1     8m ago
    spine-1(P)       spine-2          44:38:39:ff:40:94 up    up     1      1     8m ago
    spine-2          spine-1(P)       44:38:39:ff:40:94 up    up     1      1     9m ago
    netq@dc9163c7044e:/$
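
Each CLAG pair shows up twice in this output, once from each side, so the two records can be compared against each other. Here is a minimal sketch of such a check. It assumes that `(P)` marks the primary peer and can be stripped for matching; the function names are hypothetical, and the input is the table text from above.

```python
SAMPLE = """\
Node             Peer             SysMac            State Backup #Links #Dual Last Changed
---------------- ---------------- ----------------- ----- ------ ------ ----- --------------
leaf-1           leaf-2(P)        44:38:39:ff:40:93 up    up     1      1     8m ago
leaf-2(P)        leaf-1           44:38:39:ff:40:93 up    up     1      1     8m ago
spine-1(P)       spine-2          44:38:39:ff:40:94 up    up     1      1     8m ago
spine-2          spine-1(P)       44:38:39:ff:40:94 up    up     1      1     9m ago
"""


def strip_primary(name):
    """Drop the "(P)" marker (assumed here to flag the primary peer)."""
    return name.replace("(P)", "")


def parse_clag(text):
    """Map node name to its CLAG record from `netq show clag` table text."""
    rows = {}
    for line in text.splitlines():
        fields = line.split()
        # Data rows have at least 7 columns; skip titles, header and separator.
        if len(fields) < 7 or fields[0] == "Node" or set(fields[0]) == {"-"}:
            continue
        node, peer, sysmac, state, backup = fields[:5]
        rows[strip_primary(node)] = {
            "peer": strip_primary(peer),
            "sysmac": sysmac,
            "state": state,
            "backup": backup,
        }
    return rows


def clag_problems(rows):
    """List nodes whose record disagrees with the peer's record or is not up."""
    problems = []
    for node, row in sorted(rows.items()):
        peer = rows.get(row["peer"])
        if peer is None:
            problems.append(f"{node}: no record for peer {row['peer']}")
        elif peer["peer"] != node or peer["sysmac"] != row["sysmac"]:
            problems.append(f"{node}: peer {row['peer']} disagrees on pairing or SysMac")
        if row["state"] != "up" or row["backup"] != "up":
            problems.append(f"{node}: session or backup is not up")
    return problems


if __name__ == "__main__":
    # Both pairs in this lab agree with each other, so this prints an empty list.
    print(clag_problems(parse_clag(SAMPLE)))
```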

    netq@dc9163c7044e:/$ netq show lldp
    LLDP peer info for *:*
    Node    Interface   LLDP Peer   Peer Int   Last Changed
    ------- ----------- ----------- ---------- --------------
    leaf-1  eth0        cumulus     eth0       1h ago
    leaf-1  eth0        leaf-2      eth0       1h ago
    leaf-1  eth0        spine-1     eth0       1h ago
    leaf-1  eth0        spine-2     eth0       1h ago
    leaf-1  swp1        spine-1     swp1       1h ago
    leaf-1  swp11       leaf-2      swp11      9m ago
    leaf-1  swp2        spine-2     swp1       1h ago
    leaf-2  eth0        cumulus     eth0       1h ago
    leaf-2  eth0        leaf-1      eth0       1h ago
    leaf-2  eth0        spine-1     eth0       1h ago
    leaf-2  eth0        spine-2     eth0       1h ago
    leaf-2  swp1        spine-2     swp2       1h ago
    leaf-2  swp11       leaf-1      swp11      8m ago
    leaf-2  swp2        spine-1     swp2       1h ago
    spine-1 eth0        cumulus     eth0       1h ago
    spine-1 eth0        leaf-1      eth0       1h ago
    spine-1 eth0        leaf-2      eth0       1h ago
    spine-1 eth0        spine-2     eth0       1h ago
    spine-1 swp1        leaf-1      swp1       1h ago
    spine-1 swp11       spine-2     swp11      1h ago
    spine-1 swp2        leaf-2      swp2       8m ago
    spine-2 eth0        cumulus     eth0       1h ago
    spine-2 eth0        leaf-1      eth0       1h ago
    spine-2 eth0        leaf-2      eth0       1h ago
    spine-2 eth0        spine-1     eth0       1h ago
    spine-2 swp1        leaf-1      swp2       1h ago
    spine-2 swp11       spine-1     swp11      1h ago
    spine-2 swp2        leaf-2      swp1       8m ago
    netq@dc9163c7044e:/$
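
A quick sanity check on this LLDP table is to confirm that every link is reported from both ends. The sketch below does that on a few rows copied from the output above. The function names are hypothetical, and it assumes hostnames and interface names contain no spaces.

```python
SAMPLE = """\
Node    Interface   LLDP Peer   Peer Int   Last Changed
------- ----------- ----------- ---------- --------------
leaf-1  eth0        cumulus     eth0       1h ago
leaf-1  swp1        spine-1     swp1       1h ago
leaf-1  swp2        spine-2     swp1       1h ago
spine-1 swp1        leaf-1      swp1       1h ago
spine-2 swp1        leaf-1      swp2       1h ago
"""


def parse_lldp(text):
    """Return a set of (node, interface, peer, peer_interface) tuples."""
    links = set()
    for line in text.splitlines():
        fields = line.split()
        # Data rows have 6 tokens; skip the title, header and separator.
        if len(fields) < 6 or fields[0] == "Node" or set(fields[0]) == {"-"}:
            continue
        links.add((fields[0], fields[1], fields[2], fields[3]))
    return links


def one_sided(links):
    """Return links whose mirror entry (seen from the peer) is missing."""
    return sorted(
        link for link in links
        if (link[2], link[3], link[0], link[1]) not in links
    )


if __name__ == "__main__":
    for node, port, peer, peer_port in one_sided(parse_lldp(SAMPLE)):
        print(f"{node}:{port} sees {peer}:{peer_port}, but not the other way round")
```

On the sample rows only the `leaf-1:eth0` to `cumulus:eth0` entry is reported as one-sided. That matches the full output above, where `cumulus` appears as a peer but has no rows of its own as a node.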

    netq@dc9163c7044e:/$ netq show interfaces type bond
    Matching interface records are:
    Node             Interface        Type     State Details                     Last Changed
    ---------------- ---------------- -------- ----- --------------------------- --------------
    leaf-1           bond1            bond     up    Slave: swp1(spine-1:swp1),  10m ago
                                                     Slave: swp2(spine-2:swp1),
                                                     VLANs: 100-199, PVID: 1,
                                                     Master: bridge, MTU: 1500
    leaf-1           peerlink         bond     up    Slave: swp11(leaf-2:swp11), 10m ago
                                                     VLANs: , PVID: 0,
                                                     Master: peerlink, MTU: 1500
    leaf-2           bond1            bond     up    Slave: swp1(spine-2:swp2),  10m ago
                                                     Slave: swp2(spine-1:swp2),
                                                     VLANs: 100-199, PVID: 1,
                                                     Master: bridge, MTU: 1500
    leaf-2           peerlink         bond     up    Slave: swp11(leaf-1:swp11), 10m ago
                                                     VLANs: , PVID: 0,
                                                     Master: peerlink, MTU: 1500
    spine-1          bond1            bond     up    Slave: swp1(leaf-1:swp1),   10m ago
                                                     Slave: swp2(leaf-2:swp2),
                                                     VLANs: 100-199, PVID: 1,
                                                     Master: bridge, MTU: 1500
    spine-1          peerlink         bond     up    Slave: swp11(spine-2:swp11) 1h ago
                                                     , VLANs: 100-199,
                                                     PVID: 1, Master: bridge,
                                                     MTU: 1500
    spine-2          bond1            bond     up    Slave: swp1(leaf-1:swp2),   10m ago
                                                     Slave: swp2(leaf-2:swp1),
                                                     VLANs: 100-199, PVID: 1,
                                                     Master: bridge, MTU: 1500
    spine-2          peerlink         bond     up    Slave: swp11(spine-1:swp11) 1h ago
                                                     , VLANs: 100-199,
                                                     PVID: 1, Master: bridge,
                                                     MTU: 1500
    netq@dc9163c7044e:/$

    netq@dc9163c7044e:/$ netq show ip routes
    Matching IP route records are:
    Origin Table            IP               Node             Nexthops                   Last Changed
    ------ ---------------- ---------------- ---------------- -------------------------- ----------------
    1      default          169.254.1.0/30   leaf-1           peerlink.4093              11m ago
    1      default          169.254.1.0/30   leaf-2           peerlink.4093              11m ago
    1      default          169.254.1.0/30   spine-1          peerlink.4094              1h ago
    1      default          169.254.1.0/30   spine-2          peerlink.4094              1h ago
    1      default          169.254.1.1/32   leaf-1           peerlink.4093              11m ago
    1      default          169.254.1.1/32   spine-1          peerlink.4094              1h ago
    1      default          169.254.1.2/32   leaf-2           peerlink.4093              11m ago
    1      default          169.254.1.2/32   spine-2          peerlink.4094              1h ago
    1      default          192.168.100.0/24 leaf-1           eth0                       1h ago
    1      default          192.168.100.0/24 leaf-2           eth0                       1h ago
    1      default          192.168.100.0/24 spine-1          eth0                       1h ago
    1      default          192.168.100.0/24 spine-2          eth0                       1h ago
    1      default          192.168.100.205/ spine-1          eth0                       1h ago
                            32
    1      default          192.168.100.206/ spine-2          eth0                       1h ago
                            32
    1      default          192.168.100.207/ leaf-1           eth0                       1h ago
                            32
    1      default          192.168.100.208/ leaf-2           eth0                       1h ago
                            32
    0      vrf-prod         0.0.0.0/0        spine-1          Blackhole                  1h ago
    0      vrf-prod         0.0.0.0/0        spine-2          Blackhole                  1h ago
    1      vrf-prod         10.1.0.0/24      spine-1          bridge.100                 1h ago
    1      vrf-prod         10.1.0.0/24      spine-2          bridge.100                 1h ago
    1      vrf-prod         10.1.0.252/32    spine-1          bridge.100                 1h ago
    1      vrf-prod         10.1.0.253/32    spine-2          bridge.100                 1h ago
    1      vrf-prod         10.1.0.254/32    spine-1          bridge.100                 1h ago
    1      vrf-prod         10.1.0.254/32    spine-2          bridge.100                 1h ago
    1      vrf-prod         10.1.1.0/24      spine-1          bridge.101                 1h ago
    1      vrf-prod         10.1.1.0/24      spine-2          bridge.101                 1h ago
    1      vrf-prod         10.1.1.252/32    spine-1          bridge.101                 1h ago
    1      vrf-prod         10.1.1.253/32    spine-2          bridge.101                 1h ago
    1      vrf-prod         10.1.1.254/32    spine-1          bridge.101                 1h ago
    1      vrf-prod         10.1.1.254/32    spine-2          bridge.101                 1h ago
    1      vrf-prod         10.1.2.0/24      spine-1          bridge.102                 1h ago
    1      vrf-prod         10.1.2.0/24      spine-2          bridge.102                 1h ago
    1      vrf-prod         10.1.2.252/32    spine-1          bridge.102                 1h ago
    1      vrf-prod         10.1.2.253/32    spine-2          bridge.102                 1h ago
    1      vrf-prod         10.1.2.254/32    spine-1          bridge.102                 1h ago
    1      vrf-prod         10.1.2.254/32    spine-2          bridge.102                 1h ago
    netq@dc9163c7044e:/$

See Changes in Switch Fabric:

    netq@dc9163c7044e:/$ netq leaf-1 show interfaces type bond
    Matching interface records are:
    Node             Interface        Type     State Details                     Last Changed
    ---------------- ---------------- -------- ----- --------------------------- --------------
    leaf-1           bond1            bond     up    Slave: swp1(spine-1:swp1),  2s ago
                                                     Slave: swp2(spine-2:swp1),
                                                     VLANs: 100-199, PVID: 1,
                                                     Master: bridge, MTU: 1500
    leaf-1           peerlink         bond     up    Slave: swp11(leaf-2:swp11), 21m ago
                                                     VLANs: , PVID: 0,
                                                     Master: peerlink, MTU: 1500
    netq@dc9163c7044e:/$

    cumulus@leaf-1:~$ sudo ifdown bond1
    cumulus@leaf-1:~$

    netq@dc9163c7044e:/$ netq leaf-1 show interfaces type bond
    Matching interface records are:
    Node             Interface        Type     State Details                     Last Changed
    ---------------- ---------------- -------- ----- --------------------------- --------------
    leaf-1           peerlink         bond     up    Slave: swp11(leaf-2:swp11), 22m ago
                                                     VLANs: , PVID: 0,
                                                     Master: peerlink, MTU: 1500
    netq@dc9163c7044e:/$

    netq@dc9163c7044e:/$ netq leaf-1 show interfaces type bond changes
    Matching interface records are:
    Node             Interface        Type     State Details                     DbState Last Changed
    ---------------- ---------------- -------- ----- --------------------------- ------- --------------
    leaf-1           bond1            bond     down  VLANs: , PVID: 0,           Del     21s ago
                                                     Master: bridge, MTU: 1500
    leaf-1           bond1            bond     down  Slave: swp1(),              Add     21s ago
                                                     Slave: swp2(),
                                                     VLANs: 100-199, PVID: 1,
                                                     Master: bridge, MTU: 1500
    leaf-1           bond1            bond     up    Slave: swp1(spine-1:swp1),  Add     1m ago
                                                     Slave: swp2(spine-2:swp1),
                                                     VLANs: 100-199, PVID: 1,
                                                     Master: bridge, MTU: 1500

You can find more information in the Cumulus NetQ documentation: https://docs.cumulusnetworks.com/display/NETQ/NetQ