Using a GitOps way of working, with tools like the GitOps Toolkit (Flux CD v2), is great for applying configuration to your Kubernetes clusters, but what about secrets, and how can you store them securely in your repository? The perfect tool for this is Mozilla’s SOPS, which uses a cloud-based KMS, HashiCorp Vault or a PGP key to encrypt and decrypt your secrets, so they can be stored in encrypted form with the rest of your configuration in a code repository. The Flux documentation has a guide on how to use SOPS, but I did this slightly differently with Google Cloud KMS.

Start by downloading the latest version of the Mozilla SOPS command-line binary. This is what makes SOPS so easy to use: apart from a KMS or a simple PGP key, you need very little else to encrypt or decrypt secrets.

```shell
sudo wget -O /usr/local/bin/sops https://github.com/mozilla/sops/releases/download/v3.7.1/sops-v3.7.1.linux
sudo chmod 755 /usr/local/bin/sops
```

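Next, create the Google Cloud KMS key ring and key used in this example. First, log in with Application Default Credentials, which SOPS uses to access Cloud KMS from your machine.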

```shell
$ gcloud auth application-default login
Go to the following link in your browser:

    https://accounts.google.com/o/oauth2/auth?code_challenge=xxxxxxx&prompt=select_account&code_challenge_method=S256&access_type=offline&redirect_uri=urn%3Aietf%3Awg%3Aoauth%3A2.0%3Aoob&response_type=code&client_id=xxxxxxxxx-xxxxxxxxxxxxxxxxxx.apps.googleusercontent.com&scope=https%3A%2F%2Fwww.googleapis.com%2Fauth%2Fuserinfo.email+https%3A%2F%2Fwww.googleapis.com%2Fauth%2Fcloud-platform+https%3A%2F%2Fwww.googleapis.com%2Fauth%2Faccounts.reauth

Enter verification code: xxxxxxxxxxxxxxx

Credentials saved to file: [/home/ubuntu/.config/gcloud/application_default_credentials.json]

These credentials will be used by any library that requests Application Default Credentials (ADC).

$ gcloud kms keyrings create sops --location global
$ gcloud kms keys create sops-key --location global --keyring sops --purpose encryption
$ gcloud kms keys list --location global --keyring sops
NAME                                                                           PURPOSE          ALGORITHM                    PROTECTION_LEVEL  LABELS  PRIMARY_ID  PRIMARY_STATE
projects/kubernetes-xxxxxx/locations/global/keyRings/sops/cryptoKeys/sops-key  ENCRYPT_DECRYPT  GOOGLE_SYMMETRIC_ENCRYPTION  SOFTWARE                  1           ENABLED
```
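The `gcp_kms` value SOPS needs is the full resource name from the `NAME` column above. As a minimal sketch, the hypothetical helpers below (`kms_key_name` and `parse_kms_key_name` are my own names, not part of any Google library) assemble that name from its parts and split it back, which can catch a typo in the key ring or key name before it ends up in `.sops.yaml`. Only the `projects/.../locations/.../keyRings/.../cryptoKeys/...` format is taken from the output above.

```python
import re

# Format of a Cloud KMS crypto key resource name, as printed by
# `gcloud kms keys list` above.
KEY_NAME_RE = re.compile(
    r"^projects/(?P<project>[^/]+)"
    r"/locations/(?P<location>[^/]+)"
    r"/keyRings/(?P<keyring>[^/]+)"
    r"/cryptoKeys/(?P<key>[^/]+)$"
)


def kms_key_name(project: str, location: str, keyring: str, key: str) -> str:
    """Build the resource name used as the gcp_kms value (hypothetical helper)."""
    return (
        f"projects/{project}/locations/{location}"
        f"/keyRings/{keyring}/cryptoKeys/{key}"
    )


def parse_kms_key_name(name: str) -> dict:
    """Split a resource name back into its parts; raise if it is malformed."""
    match = KEY_NAME_RE.match(name)
    if match is None:
        raise ValueError(f"not a Cloud KMS crypto key name: {name!r}")
    return match.groupdict()


if __name__ == "__main__":
    name = kms_key_name("kubernetes-xxxxxx", "global", "sops", "sops-key")
    print(name)
    print(parse_kms_key_name(name))
```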

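To encrypt secrets, create a `.sops.yaml` file in the root of your code repository. The creation rule below applies to every file ending in `.yaml`, uses the Cloud KMS key created above, and only encrypts the values under the `data` and `stringData` keys, so the rest of each manifest stays readable.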

```yaml
creation_rules:
  - path_regex: \.yaml$
    gcp_kms: projects/kubernetes-xxxxxx/locations/global/keyRings/sops/cryptoKeys/sops-key
    encrypted_regex: ^(data|stringData)$
```
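SOPS evaluates `creation_rules` in order and uses the first rule whose `path_regex` matches the file being encrypted. The sketch below models that lookup, with a plain list of dicts standing in for the parsed `.sops.yaml` (no YAML parser involved). `pick_rule` is a hypothetical name and this is a simplified illustration, not SOPS's own code.

```python
import re
from typing import Optional

# The .sops.yaml creation_rules above, written as plain Python data.
CREATION_RULES = [
    {
        "path_regex": r"\.yaml$",
        "gcp_kms": "projects/kubernetes-xxxxxx/locations/global/keyRings/sops/cryptoKeys/sops-key",
        "encrypted_regex": r"^(data|stringData)$",
    },
]


def pick_rule(path: str, rules: list) -> Optional[dict]:
    """Return the first rule whose path_regex matches the path, or None.

    re.search performs an unanchored match, so the pattern's own `$`
    anchor is what restricts it to paths ending in .yaml.
    """
    for rule in rules:
        if re.search(rule["path_regex"], path):
            return rule
    return None


if __name__ == "__main__":
    for path in ["secret.yaml", "clusters/cluster-dev/secret.yaml", "secret.yml"]:
        rule = pick_rule(path, CREATION_RULES)
        print(path, "->", rule["gcp_kms"] if rule else "no matching rule")
```

Note that with this pattern a file named `secret.yml` matches no rule, so SOPS would not pick up the KMS key from `.sops.yaml` for it.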

Let’s create a simple Kubernetes secret for testing.

```shell
$ cat secret.yaml
---
apiVersion: v1
kind: Secret
metadata:
  name: mysecret
  namespace: default
type: Opaque
data:
  username: YWRtaW4=
  password: MWYyZDFlMmU2N2Rm
```
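Values under `data` in a Kubernetes Secret must be base64-encoded; the two values above are simply `admin` and `1f2d1e2e67df`. A quick way to produce or check them with Python's standard library:

```python
import base64

# Plaintext values for the example secret.
plaintext = {"username": "admin", "password": "1f2d1e2e67df"}

# Secret.data expects base64-encoded strings.
data = {k: base64.b64encode(v.encode("utf-8")).decode("ascii") for k, v in plaintext.items()}
print(data)  # {'username': 'YWRtaW4=', 'password': 'MWYyZDFlMmU2N2Rm'}

# Decoding goes the other way, e.g. to read a value after `sops -d`.
decoded = {k: base64.b64decode(v).decode("utf-8") for k, v in data.items()}
print(decoded)  # {'username': 'admin', 'password': '1f2d1e2e67df'}
```

SOPS encrypts these base64 strings as they are, so decrypting gives back the encoded form rather than `admin`.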

Encrypt `secret.yaml` using SOPS. By default, `sops -e` prints the encrypted document to standard output and leaves the original file unchanged.

```shell
$ sops -e secret.yaml
apiVersion: v1
kind: Secret
metadata:
  name: mysecret
  namespace: default
type: Opaque
data:
  username: ENC[AES256_GCM,data:<-HASH->,type:str]
  password: ENC[AES256_GCM,data:<-HASH->,type:str]
sops:
  kms: []
  gcp_kms:
  - resource_id: projects/kubernetes-xxxxxx/locations/global/keyRings/sops/cryptoKeys/sops-key
    created_at: '2021-03-01T17:25:29Z'
    enc: <-HASH->
  azure_kv: []
  lastmodified: '2021-03-01T17:25:29Z'
  mac: ENC[AES256_GCM,data:<-HASH->,type:str]
  pgp: []
  encrypted_regex: ^(data|stringData)$
  version: 3.5.0
```
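Only `username` and `password` were encrypted because `encrypted_regex` is matched against key names: once a key such as `data` matches, the values beneath it are encrypted, while `apiVersion`, `metadata` and the rest stay in plain text. The simplified model below, using the hypothetical function `paths_to_encrypt`, walks a parsed manifest (a plain dict here) and lists which leaf values would be encrypted. It ignores SOPS's other key-selection options and is not how SOPS itself is implemented.

```python
import re
from typing import Any, List, Tuple

# The example secret.yaml as a parsed document.
SECRET = {
    "apiVersion": "v1",
    "kind": "Secret",
    "metadata": {"name": "mysecret", "namespace": "default"},
    "type": "Opaque",
    "data": {"username": "YWRtaW4=", "password": "MWYyZDFlMmU2N2Rm"},
}


def paths_to_encrypt(node: Any, pattern: str,
                     path: Tuple[str, ...] = (), matched: bool = False) -> List[str]:
    """Return dotted paths of the leaf values that encrypted_regex selects.

    Simplified model: once a mapping key matches the regex, every value
    beneath it is selected; list items inherit their parent's state.
    """
    if isinstance(node, dict):
        found = []
        for key, value in node.items():
            hit = matched or re.search(pattern, str(key)) is not None
            found.extend(paths_to_encrypt(value, pattern, path + (str(key),), hit))
        return found
    if isinstance(node, list):
        found = []
        for index, item in enumerate(node):
            found.extend(paths_to_encrypt(item, pattern, path + (str(index),), matched))
        return found
    # Leaf value: selected only if a key on the way down matched.
    return [".".join(path)] if matched else []


if __name__ == "__main__":
    print(paths_to_encrypt(SECRET, r"^(data|stringData)$"))
    # ['data.username', 'data.password']
```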

Alternatively, you can encrypt the file and replace it in place.

```shell
$ sops -i -e secret.yaml
```

To decrypt the YAML file, use `sops -d`, or decrypt it in place with `sops -i -d`.

```shell
$ sops -d secret.yaml
apiVersion: v1
kind: Secret
metadata:
  name: mysecret
  namespace: default
type: Opaque
data:
  username: YWRtaW4=
  password: MWYyZDFlMmU2N2Rm
```
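Because `sops -i -d` leaves the plaintext file on disk, it is easy to commit a decrypted secret by accident. One possible safeguard, sketched here under my own assumptions, is a small check (run, for example, before committing) that a manifest still carries the `sops` metadata section with a `mac`, and that every value under `data`/`stringData` is an `ENC[...]` string. `looks_encrypted` is a hypothetical name, and it works on an already-parsed document rather than reading YAML itself.

```python
def looks_encrypted(doc: dict) -> bool:
    """Heuristic check that a parsed Secret manifest is still SOPS-encrypted."""
    meta = doc.get("sops")
    if not isinstance(meta, dict) or "mac" not in meta:
        # No SOPS metadata: the file was decrypted or never encrypted.
        return False
    for section in ("data", "stringData"):
        for value in (doc.get(section) or {}).values():
            if not (isinstance(value, str) and value.startswith("ENC[")):
                return False  # a plaintext value slipped through
    return True


if __name__ == "__main__":
    encrypted = {
        "data": {"username": "ENC[AES256_GCM,data:abc,type:str]"},
        "sops": {"mac": "ENC[AES256_GCM,data:def,type:str]"},
    }
    decrypted = {"data": {"username": "YWRtaW4="}}
    print(looks_encrypted(encrypted), looks_encrypted(decrypted))  # True False
```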

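You can also edit an encrypted file in your default terminal editor (the one set in `$EDITOR`) by running `sops` with just the file name and no other options. SOPS decrypts the file into a temporary copy, opens it in the editor and re-encrypts it when you save; if nothing changed, it exits without touching the file.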

```shell
$ sops secret.yaml
File has not changed, exiting.
```

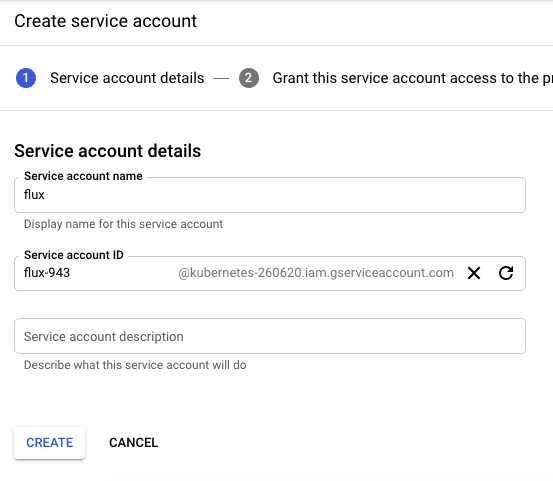

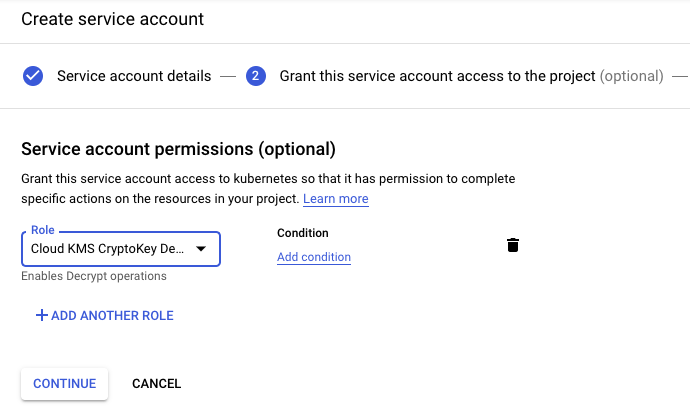

Now let’s use the Flux CD kustomize-controller to decrypt the Kubernetes secrets and apply them to the specified namespace. First, create a GCP service account for Flux and grant it permission to decrypt with the key, for example through the Cloud KMS CryptoKey Decrypter role (`roles/cloudkms.cryptoKeyDecrypter`).

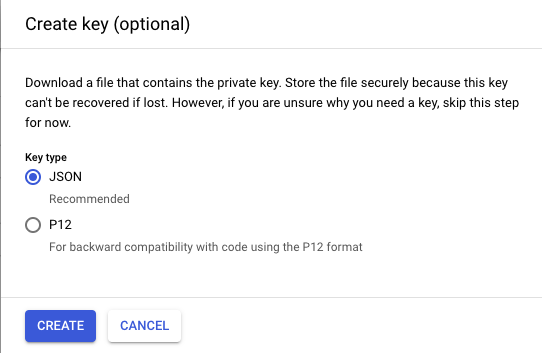

Download the JSON key file for the service account, save it as `sops-gcp`, and create a new secret from it in the Flux namespace (`gotk-system`).

```shell
$ kubectl create secret generic gcp-auth -n gotk-system --from-file=./sops-gcp
$ kubectl get secrets -n gotk-system gcp-auth -o yaml
apiVersion: v1
data:
  sops-gcp: <-BASE64-ENCODED-GCP-AUTH-JSON->
kind: Secret
metadata:
  creationTimestamp: "2021-03-01T17:34:11Z"
  name: gcp-auth
  namespace: gotk-system
  resourceVersion: "1879000"
  selfLink: /api/v1/namespaces/gotk-system/secrets/gcp-auth
  uid: 10a14c1f-19a6-41a2-8610-694b12efefee
type: Opaque
```
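`kubectl create secret generic --from-file=./sops-gcp` uses the file's base name as the key inside the secret and stores the file contents base64-encoded, which is why the data key above is `sops-gcp`. The sketch below builds an equivalent manifest as a Python dict to make that mapping visible. `secret_from_file` is a hypothetical helper, and the JSON written to the temporary file is only a stand-in for a real service account key; in practice you would simply use the kubectl command.

```python
import base64
import json
import os
import tempfile


def secret_from_file(name: str, namespace: str, path: str) -> dict:
    """Build a Secret manifest similar to `kubectl create secret generic NAME --from-file=PATH`."""
    with open(path, "rb") as handle:
        content = handle.read()
    return {
        "apiVersion": "v1",
        "kind": "Secret",
        "metadata": {"name": name, "namespace": namespace},
        "type": "Opaque",
        # The key is the file's base name; the value is the base64 of its bytes.
        "data": {os.path.basename(path): base64.b64encode(content).decode("ascii")},
    }


if __name__ == "__main__":
    # Stand-in for the downloaded service account key; a real key has more fields.
    with tempfile.TemporaryDirectory() as tmp:
        key_file = os.path.join(tmp, "sops-gcp")
        with open(key_file, "w") as handle:
            json.dump({"type": "service_account"}, handle)
        manifest = secret_from_file("gcp-auth", "gotk-system", key_file)
    print(list(manifest["data"]))  # ['sops-gcp']
```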

Next, update the kustomize-controller deployment: add a volume and volume mount for the `gcp-auth` secret, and set the `GOOGLE_APPLICATION_CREDENTIALS` environment variable to the path of the mounted credentials file. This is where my example differs from the documentation: my cluster runs locally, so I can't use integrated cloud authentication.

```yaml
...
spec:
  containers:
  ...
  - env:
    - name: GOOGLE_APPLICATION_CREDENTIALS
      value: /tmp/.gcp/credentials/sops-gcp
    name: manager
    volumeMounts:
    - mountPath: /tmp/.gcp/credentials
      name: sops-gcp
      readOnly: true
  volumes:
  - name: sops-gcp
    secret:
      defaultMode: 420
      # Name of the secret created with kubectl above
      secretName: gcp-auth
...
```
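Three names have to line up here: the volume's `secretName` must be the secret created with kubectl (`gcp-auth`), the file inside the mount is named after the secret's data key (`sops-gcp`, assuming no `items` list is set on the volume), and `GOOGLE_APPLICATION_CREDENTIALS` must point at `mountPath` plus that key. The hypothetical check below, `credentials_problems`, expresses those rules over a pod spec written as a Python dict. It is a sketch for illustration only and not part of Flux.

```python
import posixpath

# Pod spec fragment of the kustomize-controller Deployment, with secretName
# set to the secret created by kubectl.
POD_SPEC = {
    "containers": [
        {
            "name": "manager",
            "env": [{"name": "GOOGLE_APPLICATION_CREDENTIALS",
                     "value": "/tmp/.gcp/credentials/sops-gcp"}],
            "volumeMounts": [{"mountPath": "/tmp/.gcp/credentials",
                              "name": "sops-gcp", "readOnly": True}],
        }
    ],
    "volumes": [{"name": "sops-gcp",
                 "secret": {"defaultMode": 420, "secretName": "gcp-auth"}}],
}


def credentials_problems(pod_spec: dict, container: str,
                         secret_name: str, secret_key: str) -> list:
    """Return a list of problems; an empty list means the wiring looks consistent."""
    volumes = {v["name"]: v for v in pod_spec.get("volumes", [])}
    target = next((c for c in pod_spec["containers"] if c["name"] == container), None)
    if target is None:
        return [f"container {container!r} not found"]
    env = {e["name"]: e.get("value") for e in target.get("env", [])}
    expected_paths = set()
    for mount in target.get("volumeMounts", []):
        volume = volumes.get(mount["name"], {})
        if volume.get("secret", {}).get("secretName") == secret_name:
            # A secret volume exposes each data key as a file under mountPath.
            expected_paths.add(posixpath.join(mount["mountPath"], secret_key))
    if not expected_paths:
        return [f"no volume mounts secret {secret_name!r} in {container!r}"]
    if env.get("GOOGLE_APPLICATION_CREDENTIALS") not in expected_paths:
        return ["GOOGLE_APPLICATION_CREDENTIALS does not point at the mounted key file"]
    return []


if __name__ == "__main__":
    print(credentials_problems(POD_SPEC, "manager", "gcp-auth", "sops-gcp"))  # []
```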

In the Flux `Kustomization` object, enable the `sops` decryption provider; the controller then decrypts the encrypted secrets and applies them on the next reconciliation.

```yaml
apiVersion: kustomize.toolkit.fluxcd.io/v1beta1
kind: Kustomization
metadata:
  name: cluster
  namespace: gotk-system
spec:
  decryption:
    provider: sops
  interval: 5m0s
  path: ./clusters/cluster-dev
  prune: true
  sourceRef:
    kind: GitRepository
    name: github-source
```

The sync can take a few minutes (this Kustomization reconciles every five minutes). Once it has completed, we can check whether the example secret was created correctly.

```shell
$ kubectl get secrets mysecret -n default -o yaml
apiVersion: v1
data:
  password: MWYyZDFlMmU2N2Rm
  username: YWRtaW4=
kind: Secret
metadata:
  name: mysecret
  namespace: default
  resourceVersion: "3439293"
  selfLink: /api/v1/namespaces/default/secrets/mysecret
  uid: 4a009675-3c89-448b-bb86-6211cec3d4ea
type: Opaque
```

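The `data` values match those in the original `secret.yaml`, so Flux decrypted the secret before applying it. This is how to use SOPS and Flux CD v2 to decrypt and apply Kubernetes secrets with GitOps.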