I am currently looking into open source alternatives to commercial routers from Cisco or Juniper for use in Amazon AWS Transit VPCs. One option is to build the software router myself with Debian Linux, FRR (Free Range Routing) and StrongSwan; read my post about the self-built software router: Open Source Routing GRE over IPSec with StrongSwan and Cisco IOS-XE.

A few years back I worked with Juniper JunOS routers, so I thought I'd give VyOS a try because its command line is very similar.

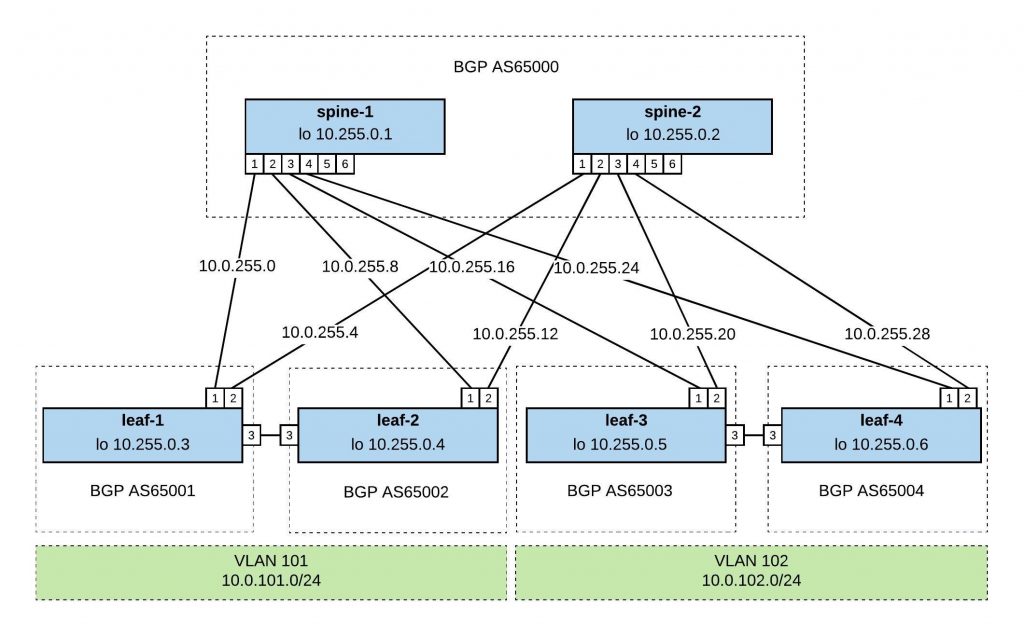

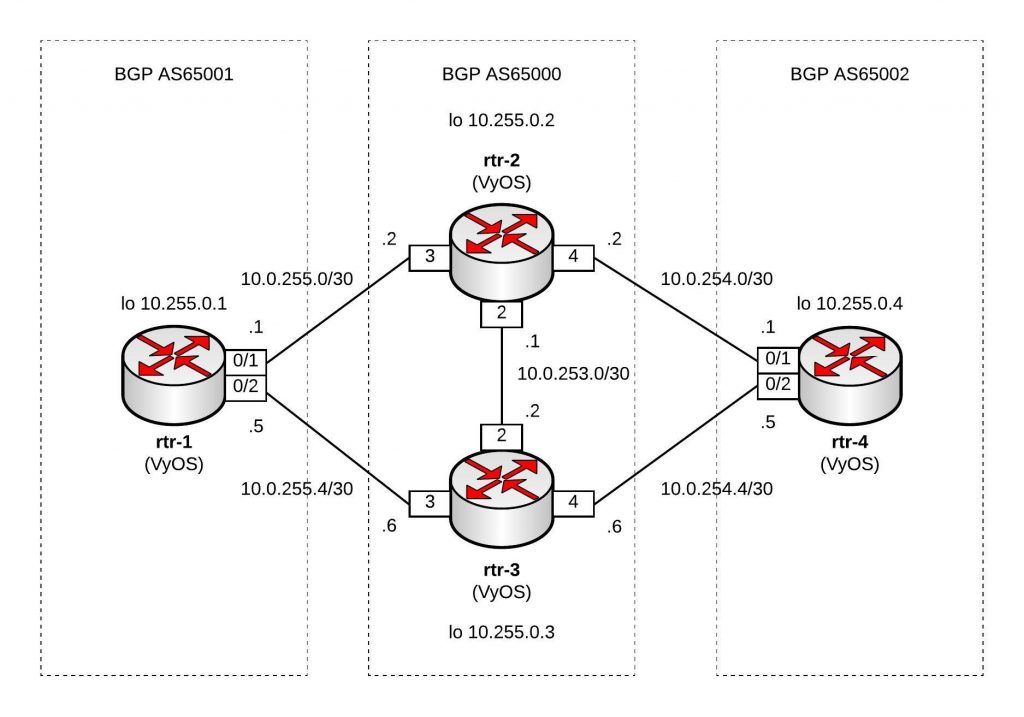

I replicated the Vagrant topology from my post Ansible Playbook for Cisco BGP Routing Topology, but used VyOS instead of Cisco.

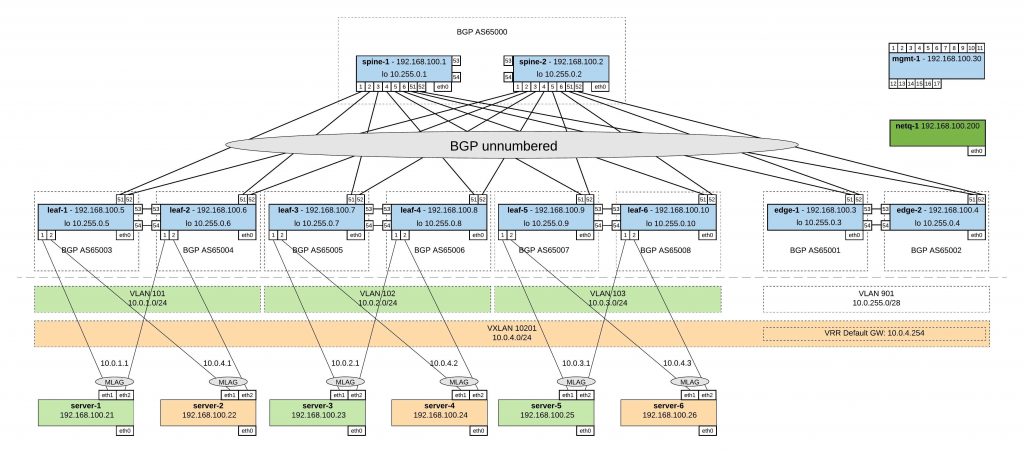

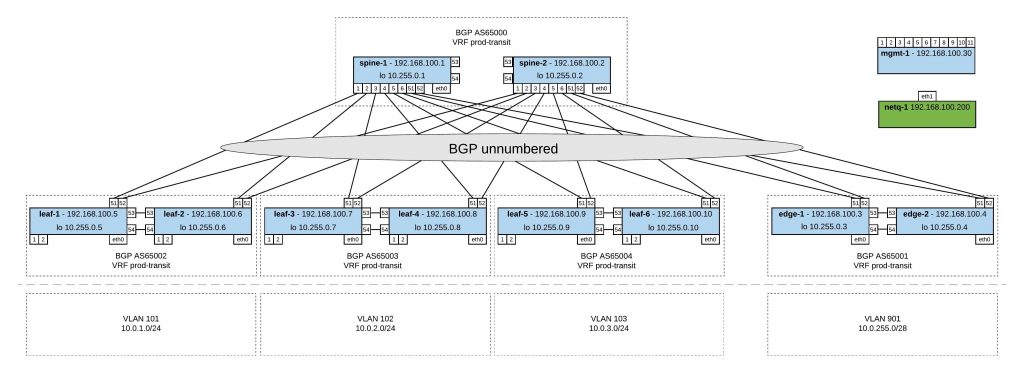

Network overview:

Here are the repositories for the Vagrant topology https://github.com/berndonline/vyos-lab-vagrant and the Ansible Playbook https://github.com/berndonline/vyos-lab-provision.

The Ansible Playbook site.yml is very simple: it uses the Ansible module vyos_system to change the hostname and the module vyos_config for the interface and routing configuration:

```yaml
---
- hosts: all
  connection: local
  user: '{{ ansible_ssh_user }}'
  gather_facts: 'no'
  roles:
    - hostname
    - interfaces
    - routing
```

Here is an example host_vars file, rtr-1.yml:

```yaml
---
hostname: rtr-1
domain_name: lab.local
loopback:
  dum0:
    alias: dummy loopback0
    address: 10.255.0.1
    mask: /32
interfaces:
  eth1:
    alias: connection rtr-2
    address: 10.0.255.1
    mask: /30
  eth2:
    alias: connection rtr-3
    address: 10.0.255.5
    mask: /30
bgp:
  asn: 65001
  neighbor:
    - {address: 10.0.255.2, remote_as: 65000}
    - {address: 10.0.255.6, remote_as: 65000}
  networks:
    - {network: 10.0.255.0, mask: /30}
    - {network: 10.0.255.4, mask: /30}
    - {network: 10.255.0.1, mask: /32}
  maxpath: 2
```
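In rtr-1.yml the networks list under bgp is exactly the set of connected prefixes: the two /30 links and the /32 loopback. If you would rather not maintain it by hand, it can be derived from the interface data. The following is a minimal Python sketch that uses only the standard ipaddress module. connected_networks is a hypothetical helper and is not part of the vyos-lab-provision repository.

```python
import ipaddress

# Addresses and masks copied from host_vars/rtr-1.yml (aliases omitted).
loopback = {"dum0": {"address": "10.255.0.1", "mask": "/32"}}
interfaces = {
    "eth1": {"address": "10.0.255.1", "mask": "/30"},
    "eth2": {"address": "10.0.255.5", "mask": "/30"},
}


def connected_networks(*groups):
    """Return the connected prefix of every interface as (network, mask) tuples."""
    result = []
    for group in groups:
        for value in group.values():
            # strict=False accepts a host address and returns its enclosing prefix.
            net = ipaddress.ip_network(value["address"] + value["mask"], strict=False)
            result.append((str(net.network_address), "/" + str(net.prefixlen)))
    return result


if __name__ == "__main__":
    # Print in the same flow-mapping style as the networks list in rtr-1.yml.
    for network, mask in connected_networks(interfaces, loopback):
        print(f"- {{network: {network}, mask: {mask}}}")
```

Running it prints the three entries 10.0.255.0/30, 10.0.255.4/30 and 10.255.0.1/32 in the same order as the hand-written list.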

The template interfaces.j2 generates the interface configuration:

```jinja
{% if loopback is defined %}
{% for port, value in loopback.items() %}
set interfaces dummy {{ port }} address '{{ value.address }}{{ value.mask }}'
set interfaces dummy {{ port }} description '{{ value.alias }}'
{% endfor %}
{% endif %}
{% if interfaces is defined %}
{% for port, value in interfaces.items() %}
set interfaces ethernet {{ port }} address '{{ value.address }}{{ value.mask }}'
set interfaces ethernet {{ port }} description '{{ value.alias }}'
{% endfor %}
{% endif %}
```
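To make the template's effect concrete, here is a plain-Python sketch that mirrors its two loops for the rtr-1 host_vars. It builds the list of VyOS set commands the template should render. It deliberately avoids Jinja2 and Ansible so that it runs with the standard library alone, and interface_commands is a hypothetical name used only for illustration.

```python
# Values copied from host_vars/rtr-1.yml.
loopback = {"dum0": {"alias": "dummy loopback0", "address": "10.255.0.1", "mask": "/32"}}
interfaces = {
    "eth1": {"alias": "connection rtr-2", "address": "10.0.255.1", "mask": "/30"},
    "eth2": {"alias": "connection rtr-3", "address": "10.0.255.5", "mask": "/30"},
}


def interface_commands(loopback=None, interfaces=None):
    """Build the 'set interfaces' lines that interfaces.j2 renders (illustrative only)."""
    commands = []
    # Equivalent of '{% if loopback is defined %}' plus the dummy-interface loop.
    for port, value in (loopback or {}).items():
        commands.append(f"set interfaces dummy {port} address '{value['address']}{value['mask']}'")
        commands.append(f"set interfaces dummy {port} description '{value['alias']}'")
    # Equivalent of '{% if interfaces is defined %}' plus the ethernet loop.
    for port, value in (interfaces or {}).items():
        commands.append(f"set interfaces ethernet {port} address '{value['address']}{value['mask']}'")
        commands.append(f"set interfaces ethernet {port} description '{value['alias']}'")
    return commands


if __name__ == "__main__":
    for line in interface_commands(loopback, interfaces):
        print(line)
```

Like the template, the sketch simply skips a section when the corresponding variable is missing, which is what the `is defined` checks achieve.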

This is the template routing.j2, which generates the routing configuration:

```jinja
{% if bgp is defined %}
{% if bgp.maxpath is defined %}
set protocols bgp {{ bgp.asn }} maximum-paths ebgp '{{ bgp.maxpath }}'
{% endif %}
{% for item in bgp.neighbor %}
set protocols bgp {{ bgp.asn }} neighbor {{ item.address }} ebgp-multihop '2'
set protocols bgp {{ bgp.asn }} neighbor {{ item.address }} remote-as '{{ item.remote_as }}'
{% endfor %}
{% for item in bgp.networks %}
set protocols bgp {{ bgp.asn }} network '{{ item.network }}{{ item.mask }}'
{% endfor %}
set protocols bgp {{ bgp.asn }} parameters router-id '{{ loopback.dum0.address }}'
{% endif %}
```

The output of the Ansible Playbook run:

```
PLAY [all] *********************************************************************

TASK [hostname : write hostname and domain-name] *******************************
changed: [rtr-3]
changed: [rtr-2]
changed: [rtr-4]
changed: [rtr-1]

TASK [interfaces : write interfaces config] ************************************
changed: [rtr-4]
changed: [rtr-1]
changed: [rtr-3]
changed: [rtr-2]

TASK [routing : write routing config] ******************************************
changed: [rtr-2]
changed: [rtr-4]
changed: [rtr-3]
changed: [rtr-1]

PLAY RECAP *********************************************************************
rtr-1 : ok=3 changed=3 unreachable=0 failed=0
rtr-2 : ok=3 changed=3 unreachable=0 failed=0
rtr-3 : ok=3 changed=3 unreachable=0 failed=0
rtr-4 : ok=3 changed=3 unreachable=0 failed=0
```

As in all my other Ansible Playbooks, I include some validation: a simple ping check, vyos_check_icmp.yml, to verify that the configuration is deployed correctly:

```yaml
---
- hosts: all
  connection: local
  user: '{{ ansible_ssh_user }}'
  gather_facts: 'no'
  tasks:
    - name: validate connection from rtr-1
      vyos_command:
        commands: 'ping {{ item }} count 4'
      when: "'rtr-1' in inventory_hostname"
      with_items:
        - '10.0.255.2'
        - '10.0.255.6'

    - name: validate connection from rtr-2
      vyos_command:
        commands: 'ping {{ item }} count 4'
      when: "'rtr-2' in inventory_hostname"
      with_items:
        - '10.0.255.1'
        - '10.0.254.1'
        - '10.0.253.2'
...
```
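The directly connected ping targets in this playbook are the far-end addresses of each /30 link. For example, rtr-1's eth1 address 10.0.255.1/30 pairs with 10.0.255.2. Instead of typing these per task, they could be derived from the host_vars. Below is a minimal Python sketch using the standard ipaddress module. p2p_peer is a hypothetical helper that is not part of the playbook, and it does not cover the BGP loopback checks.

```python
import ipaddress


def p2p_peer(address_with_mask):
    """Return the far-end address of a /30 point-to-point link."""
    iface = ipaddress.ip_interface(address_with_mask)
    if iface.network.prefixlen != 30:
        raise ValueError(f"{address_with_mask} is not on a /30 link")
    # A /30 has exactly two usable host addresses; the peer is the one that is not ours.
    peers = [host for host in iface.network.hosts() if host != iface.ip]
    if len(peers) != 1:
        raise ValueError(f"{address_with_mask} is not a usable host address")
    return str(peers[0])


# Interface addresses of rtr-1 from host_vars/rtr-1.yml.
rtr1_interfaces = {"eth1": "10.0.255.1/30", "eth2": "10.0.255.5/30"}

if __name__ == "__main__":
    targets = [p2p_peer(addr) for addr in rtr1_interfaces.values()]
    print(targets)  # ['10.0.255.2', '10.0.255.6']
```

The result matches the two with_items entries of the rtr-1 task, so a generated list could replace the hard-coded one.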

The output of the ICMP validation Playbook:

```
PLAY [all] *********************************************************************

TASK [validate connection from rtr-1] ******************************************
skipping: [rtr-3] => (item=10.0.255.2)
skipping: [rtr-3] => (item=10.0.255.6)
skipping: [rtr-2] => (item=10.0.255.2)
skipping: [rtr-2] => (item=10.0.255.6)
skipping: [rtr-4] => (item=10.0.255.2)
skipping: [rtr-4] => (item=10.0.255.6)
ok: [rtr-1] => (item=10.0.255.2)
ok: [rtr-1] => (item=10.0.255.6)

TASK [validate connection from rtr-2] ******************************************
skipping: [rtr-3] => (item=10.0.255.1)
skipping: [rtr-3] => (item=10.0.254.1)
skipping: [rtr-1] => (item=10.0.255.1)
skipping: [rtr-3] => (item=10.0.253.2)
skipping: [rtr-1] => (item=10.0.254.1)
skipping: [rtr-1] => (item=10.0.253.2)
skipping: [rtr-4] => (item=10.0.255.1)
skipping: [rtr-4] => (item=10.0.254.1)
skipping: [rtr-4] => (item=10.0.253.2)
ok: [rtr-2] => (item=10.0.255.1)
ok: [rtr-2] => (item=10.0.254.1)
ok: [rtr-2] => (item=10.0.253.2)

TASK [validate connection from rtr-3] ******************************************
skipping: [rtr-1] => (item=10.0.255.5)
skipping: [rtr-1] => (item=10.0.254.5)
skipping: [rtr-2] => (item=10.0.255.5)
skipping: [rtr-1] => (item=10.0.253.1)
skipping: [rtr-2] => (item=10.0.254.5)
skipping: [rtr-2] => (item=10.0.253.1)
skipping: [rtr-4] => (item=10.0.255.5)
skipping: [rtr-4] => (item=10.0.254.5)
skipping: [rtr-4] => (item=10.0.253.1)
ok: [rtr-3] => (item=10.0.255.5)
ok: [rtr-3] => (item=10.0.254.5)
ok: [rtr-3] => (item=10.0.253.1)

TASK [validate connection from rtr-4] ******************************************
skipping: [rtr-3] => (item=10.0.254.2)
skipping: [rtr-3] => (item=10.0.254.6)
skipping: [rtr-1] => (item=10.0.254.2)
skipping: [rtr-1] => (item=10.0.254.6)
skipping: [rtr-2] => (item=10.0.254.2)
skipping: [rtr-2] => (item=10.0.254.6)
ok: [rtr-4] => (item=10.0.254.2)
ok: [rtr-4] => (item=10.0.254.6)

TASK [validate bgp connection from rtr-1] **************************************
skipping: [rtr-3] => (item=10.255.0.4)
skipping: [rtr-2] => (item=10.255.0.4)
skipping: [rtr-4] => (item=10.255.0.4)
ok: [rtr-1] => (item=10.255.0.4)

TASK [validate bgp connection from rtr-4] **************************************
skipping: [rtr-3] => (item=10.255.0.1)
skipping: [rtr-1] => (item=10.255.0.1)
skipping: [rtr-2] => (item=10.255.0.1)
ok: [rtr-4] => (item=10.255.0.1)

PLAY RECAP *********************************************************************
rtr-1 : ok=2 changed=0 unreachable=0 failed=0
rtr-2 : ok=1 changed=0 unreachable=0 failed=0
rtr-3 : ok=1 changed=0 unreachable=0 failed=0
rtr-4 : ok=2 changed=0 unreachable=0 failed=0
```

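As you can see, the configuration is deployed successfully and BGP is working between the nodes: the loopback pings between rtr-1 and rtr-4, which are not directly connected, rely on routes learned via BGP.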
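Reading the PLAY RECAP by eye is fine for four routers. For an unattended run, ansible-playbook already exits with a non-zero status when a host fails or is unreachable. If you only have the captured log, a small Python sketch like the one below can check the recap instead. It assumes the default stdout callback format shown in the outputs above. parse_recap, all_hosts_ok and the file name check_recap.py are hypothetical.

```python
import re
import sys

# A recap line looks like "rtr-1 : ok=2 changed=0 unreachable=0 failed=0".
RECAP_LINE = re.compile(r"^(?P<host>\S+)\s*:\s*(?P<counters>(?:\w+=\d+\s*)+)$")


def parse_recap(output):
    """Return {host: {counter: value}} for the lines that follow 'PLAY RECAP'."""
    hosts = {}
    in_recap = False
    for line in output.splitlines():
        if line.startswith("PLAY RECAP"):
            in_recap = True
            continue
        match = RECAP_LINE.match(line.strip()) if in_recap else None
        if match:
            pairs = re.findall(r"(\w+)=(\d+)", match.group("counters"))
            hosts[match.group("host")] = {key: int(value) for key, value in pairs}
    return hosts


def all_hosts_ok(output):
    """True if a recap was found and no host reports failed or unreachable tasks."""
    recap = parse_recap(output)
    return bool(recap) and all(
        counters.get("failed", 0) == 0 and counters.get("unreachable", 0) == 0
        for counters in recap.values()
    )


if __name__ == "__main__":
    # Example: ansible-playbook vyos_check_icmp.yml | python3 check_recap.py
    sys.exit(0 if all_hosts_ok(sys.stdin.read()) else 1)
```

Extra counters printed by newer Ansible versions, such as skipped or rescued, are parsed too and simply ignored by the check.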