More about Ansible network automation, this time with Cisco ASAv and continuous integration testing using Vagrant and Gitlab-CI, as in my previous posts.

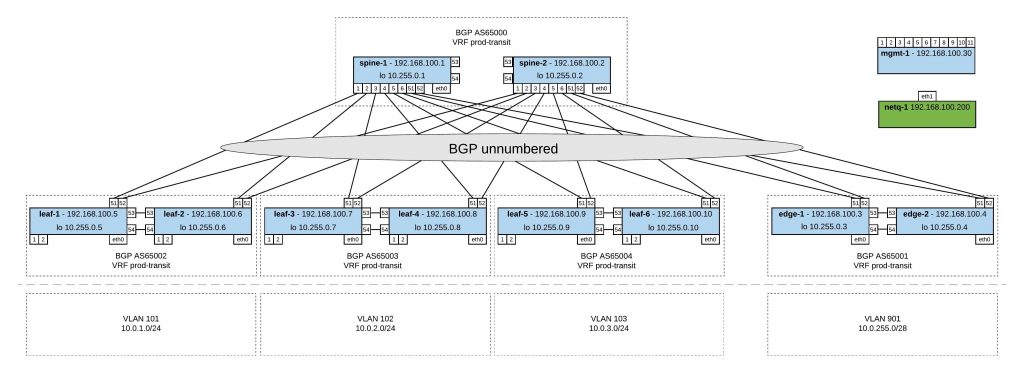

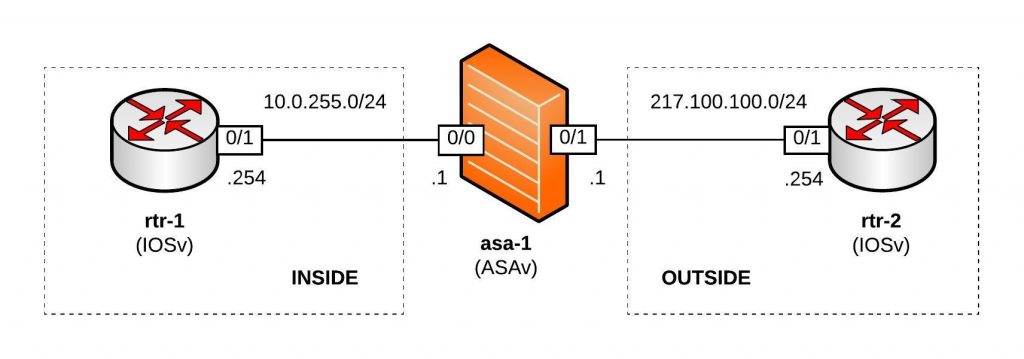

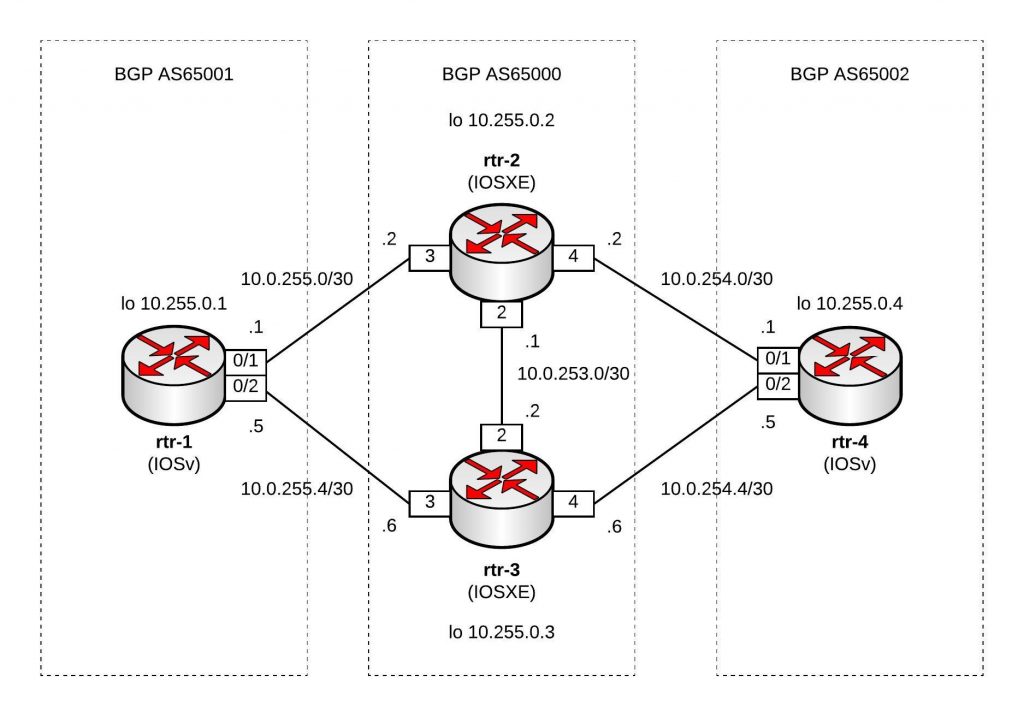

Network overview:

Here’s my GitHub repository where you can find the complete Ansible Playbook: https://github.com/berndonline/asa-lab-provision

Automating firewall configuration is not easy and can get complicated quickly: you have different objects, access-lists and service policies to configure, which together make the playbook more complex.

What you won’t find in my playbook is how to automate the cluster deployment, because this wasn’t possible in my scenario using ASAv and Vagrant. I didn’t have a physical Cisco ASA firewall on hand to do this, but I might add it in the coming months.

Let’s look at the different variable files I created. First the host_vars file asa-1.yml, which looks very similar to that of a Cisco router:

    ---
    hostname: asa-1
    domain_name: lab.local

    interfaces:
      0/0:
        alias: connection rtr-1 inside
        nameif: inside
        security_level: 100
        address: 10.0.255.1
        mask: 255.255.255.0
      0/1:
        alias: connection rtr-2 outside
        nameif: outside
        security_level: 0
        address: 217.100.100.1
        mask: 255.255.255.0

    routes:
      - route outside 0.0.0.0 0.0.0.0 217.100.100.254 1

I then use multiple files in group_vars for objects.yml, object-groups.yml, access-lists.yml and nat.yml to configure specific firewall settings.
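To give an idea of what such a group_vars file can look like, here is a minimal sketch of an objects.yml. The variable name `objects`, the keys and the object names and addresses are assumptions for illustration only; the actual layout is in the repository linked above.

```yaml
---
# group_vars/objects.yml (hypothetical layout, not copied from the repository)
objects:
  # A single host object; name and address are made up for this example
  - name: web-server
    type: host
    address: 10.0.255.10
  # A subnet object matching the inside network from host_vars
  - name: inside-net
    type: subnet
    address: 10.0.255.0
    mask: 255.255.255.0
```

A template such as objects.j2 could then loop over this list and emit one `object network` block per entry.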

Roles:

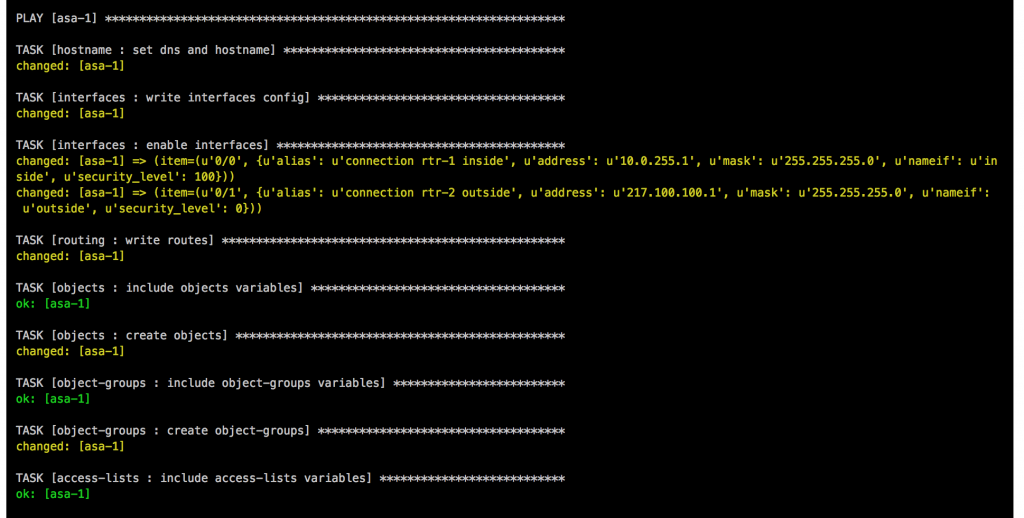

- Hostname: The task in main.yml uses the Ansible module asa_config and configures hostname and domain name.

- Interfaces: This role uses the Ansible module asa_config to deploy the template interfaces.j2, which configures the interfaces. A second task in main.yml enables the interfaces once the template has been applied.

- Routing: Similar to the interfaces role, this also uses the asa_config module to deploy the template routing.j2 for the static routes.

- Objects: The first task in main.yml loads the objects.yml from group_vars, the second task deploys the template objects.j2.

- Object-Groups: Uses the same tasks in main.yml as the objects role, with the template object-groups.j2, but the commands are slightly different.

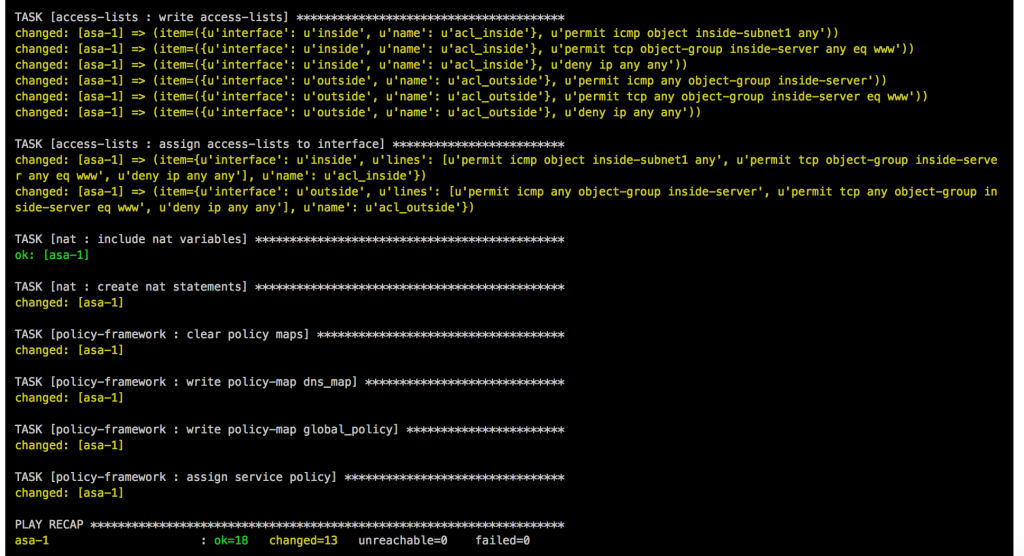

- Access-Lists: One of the more complicated roles I needed to work on. The main.yml contains multiple tasks: it first loads the variables like the previous roles, then runs a task to clear the access-lists if the variable “override_acl” from the access-lists.yml group_vars is set to “true”; otherwise it skips the remaining tasks. When the variable is set to true and the access-lists are cleared, it writes the new access-lists using the Ansible module asa_acl and finishes with a task that assigns the newly created access-lists to the interfaces.

- NAT: This role is again similar to the objects role: a task in main.yml loads the variable file and deploys the template nat.j2. The NAT role uses object NAT and only works if you have already created the object in the objects group_vars.

- Policy-Framework: Multiple tasks in main.yml first clear the global policy and policy maps and then recreate them. This is the same approach as for the access-lists, to keep things consistent.
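The access-lists flow described above (load variables, clear, rewrite, assign) can be sketched roughly as follows. This is not the role from the repository: the variable `access_lists` with its keys `name`, `lines`, `direction` and `interface`, the include_vars path and the `cli` provider variable are all assumptions made for this example. Only the modules asa_config and asa_acl and the variable `override_acl` come from the description above.

```yaml
---
# roles/access-lists/tasks/main.yml (illustrative sketch, assumed variable layout)

- name: load access-list variables
  include_vars:
    file: group_vars/access-lists.yml   # path is an assumption

# Everything below only runs when override_acl is true
- name: clear existing access-lists
  asa_config:
    lines:
      - "clear configure access-list {{ item.name }}"
    provider: "{{ cli }}"               # assumed connection details
  with_items: "{{ access_lists }}"
  when: override_acl | bool

- name: write new access-lists
  asa_acl:
    lines: "{{ item.lines }}"           # list of full access-list commands
    provider: "{{ cli }}"
  with_items: "{{ access_lists }}"
  when: override_acl | bool

- name: assign access-lists to interfaces
  asa_config:
    lines:
      - "access-group {{ item.name }} {{ item.direction }} interface {{ item.interface }}"
    provider: "{{ cli }}"
  with_items: "{{ access_lists }}"
  when: override_acl | bool
```

Guarding every task with the same condition means a run with override_acl set to false leaves the existing access-lists untouched.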

Main Ansible Playbook site.yml:

    ---
    - hosts: asa-1
      connection: local
      user: vagrant
      gather_facts: 'no'
      roles:
        - hostname
        - interfaces
        - routing
        - objects
        - object-groups
        - access-lists
        - nat
        - policy-framework

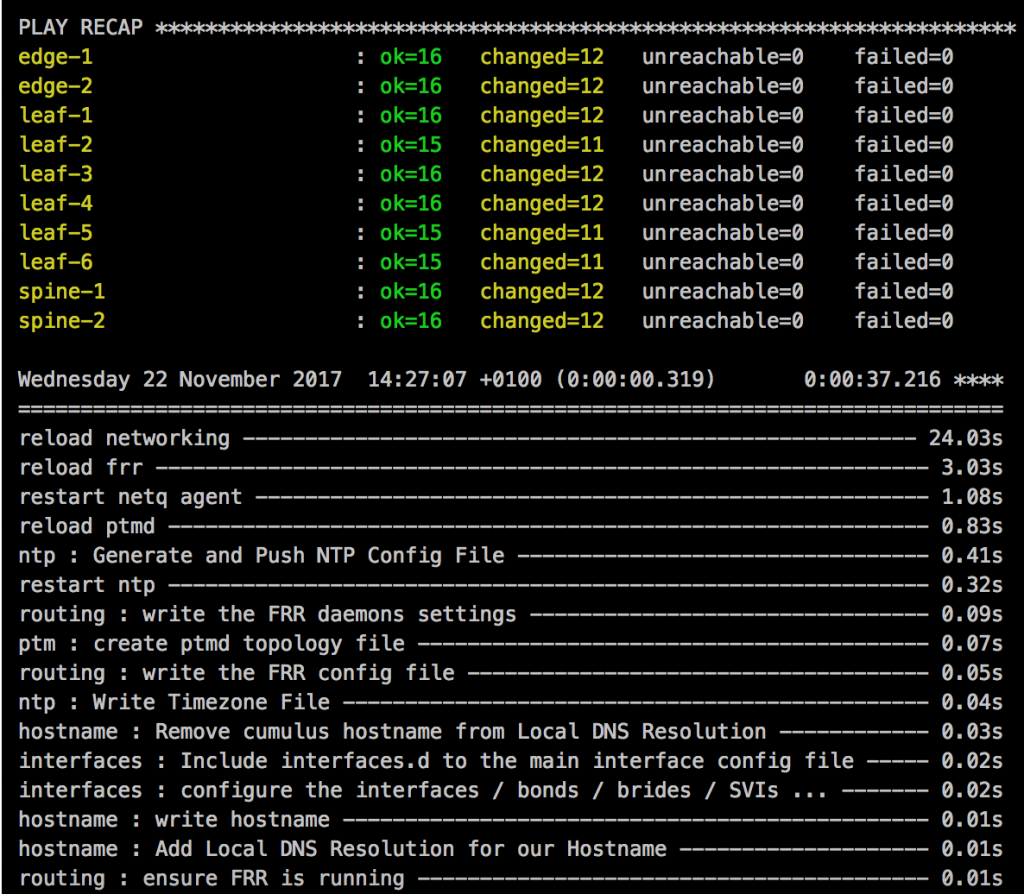

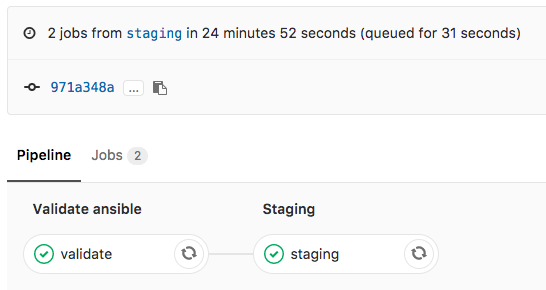

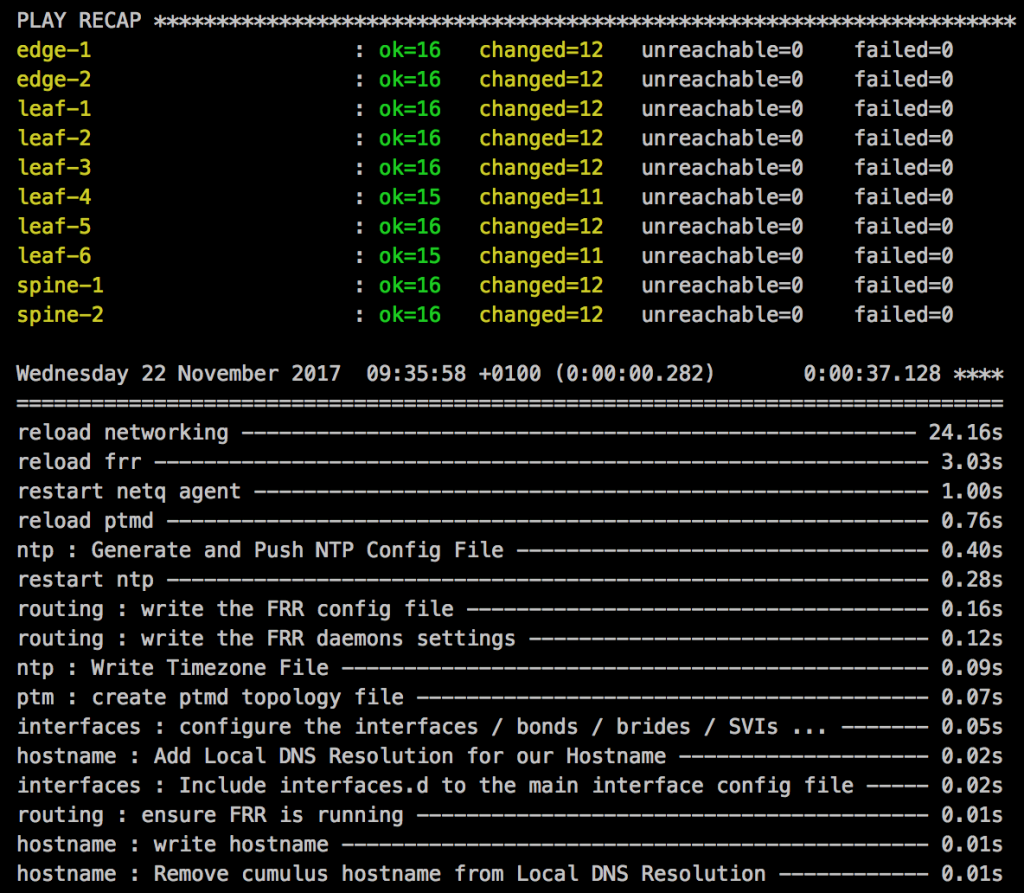

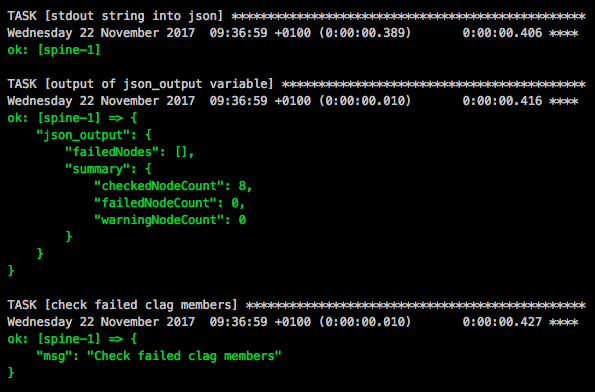

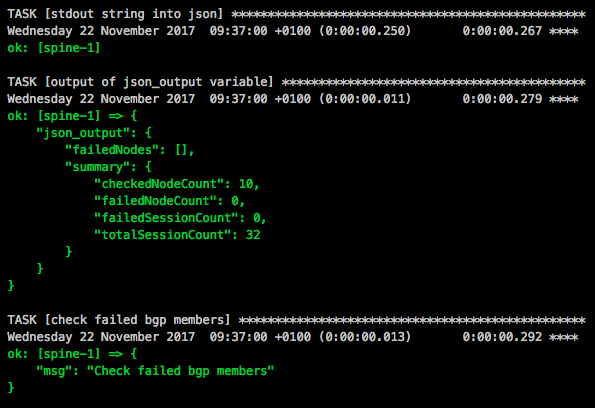

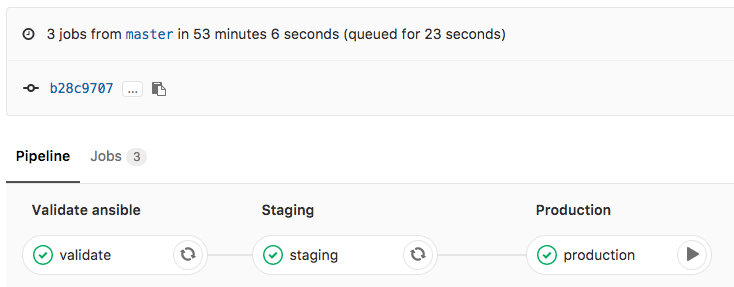

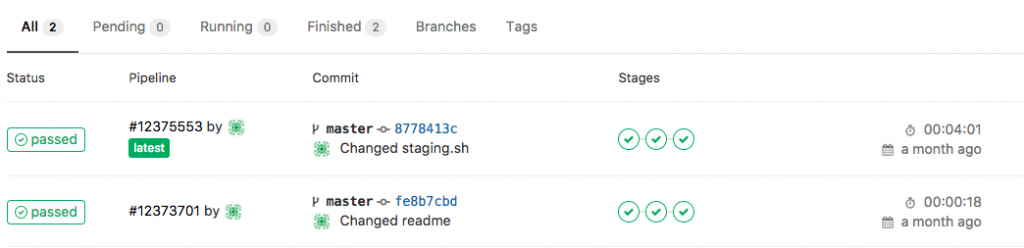

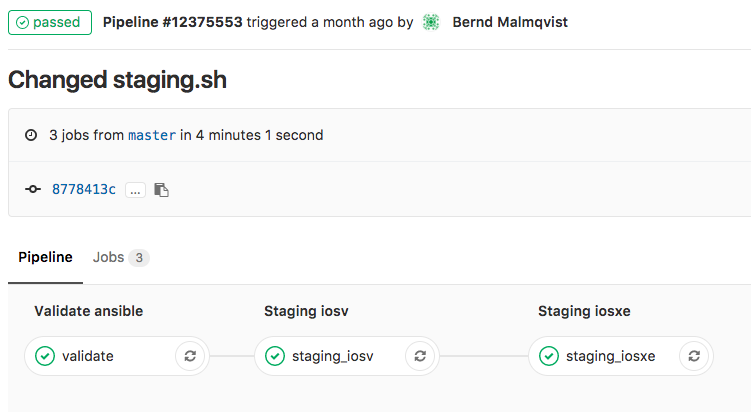

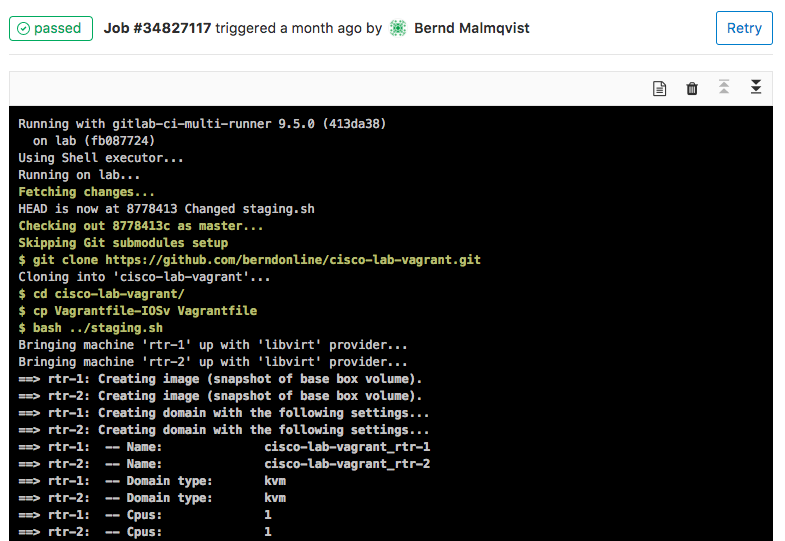

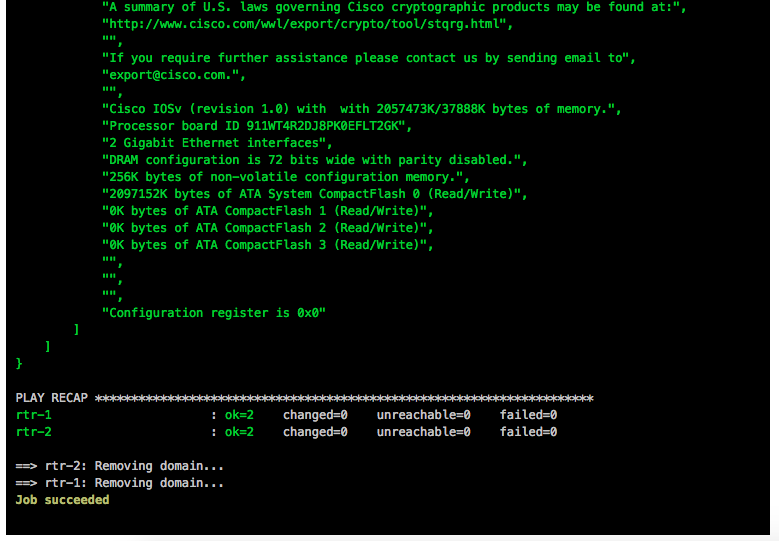

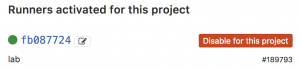

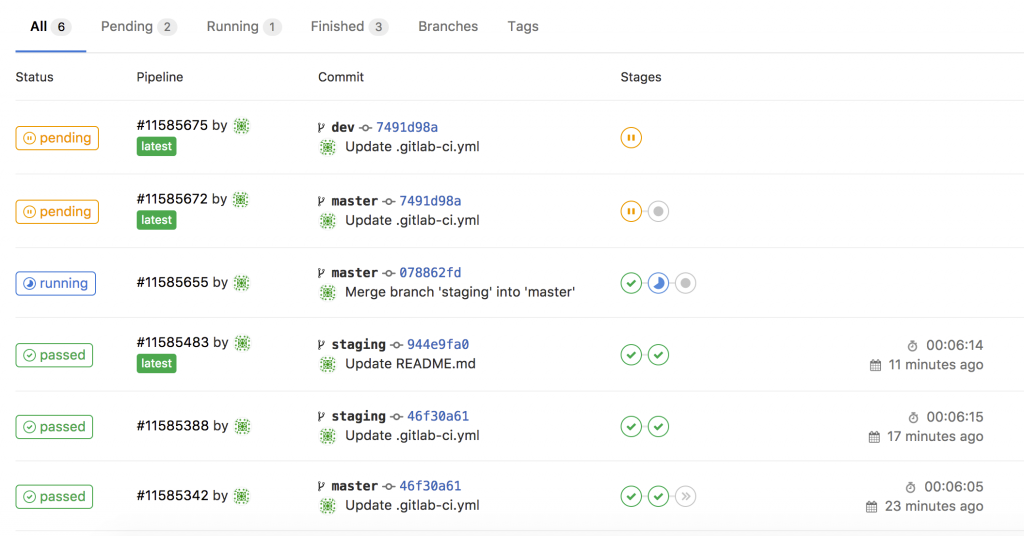

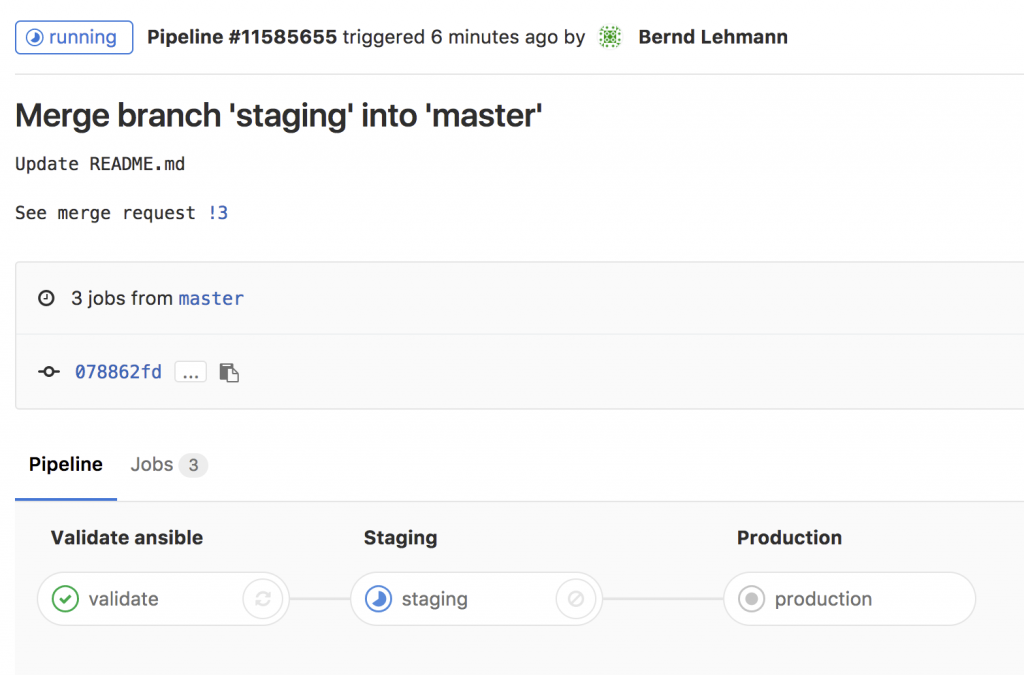

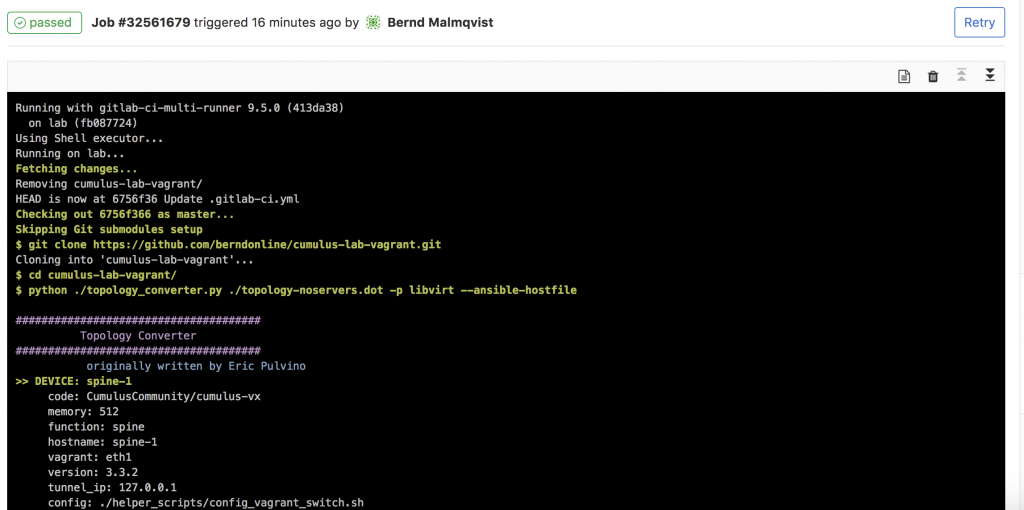

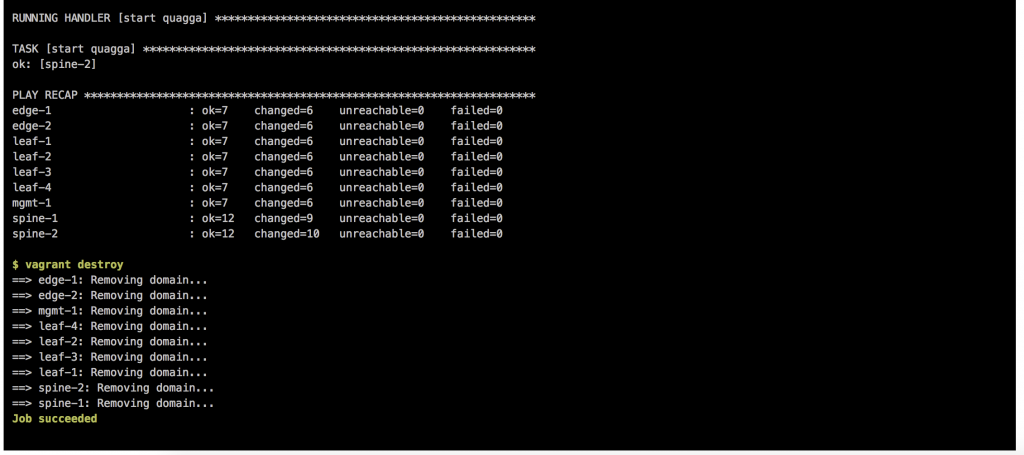

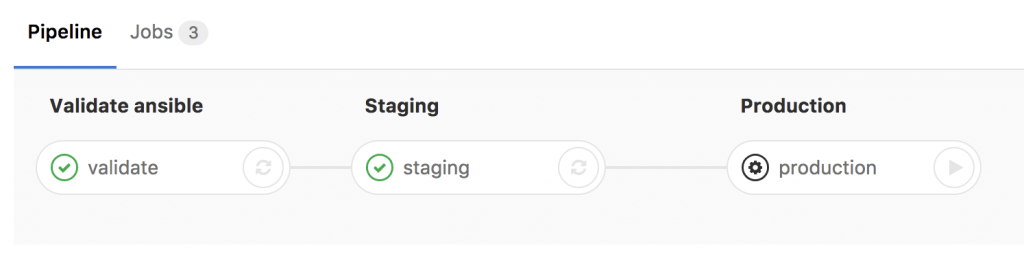

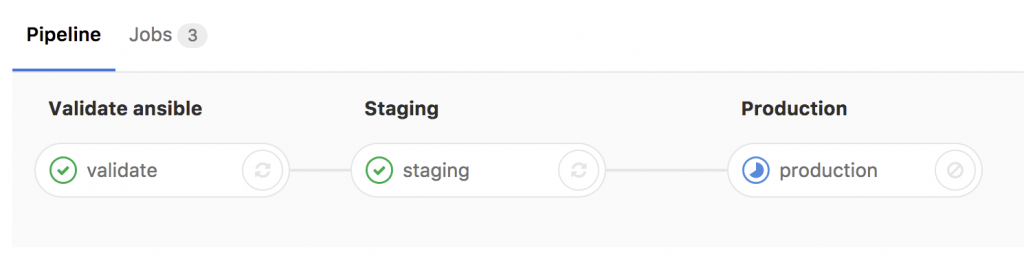

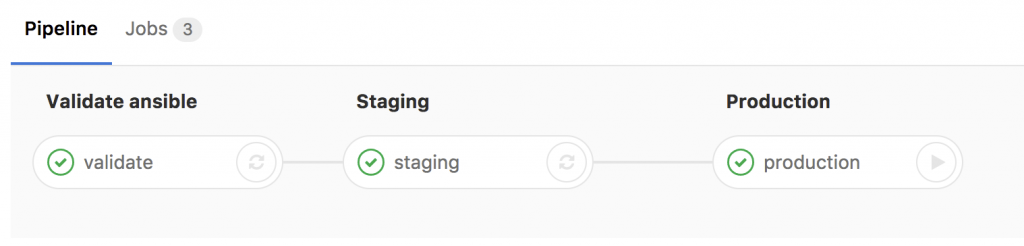

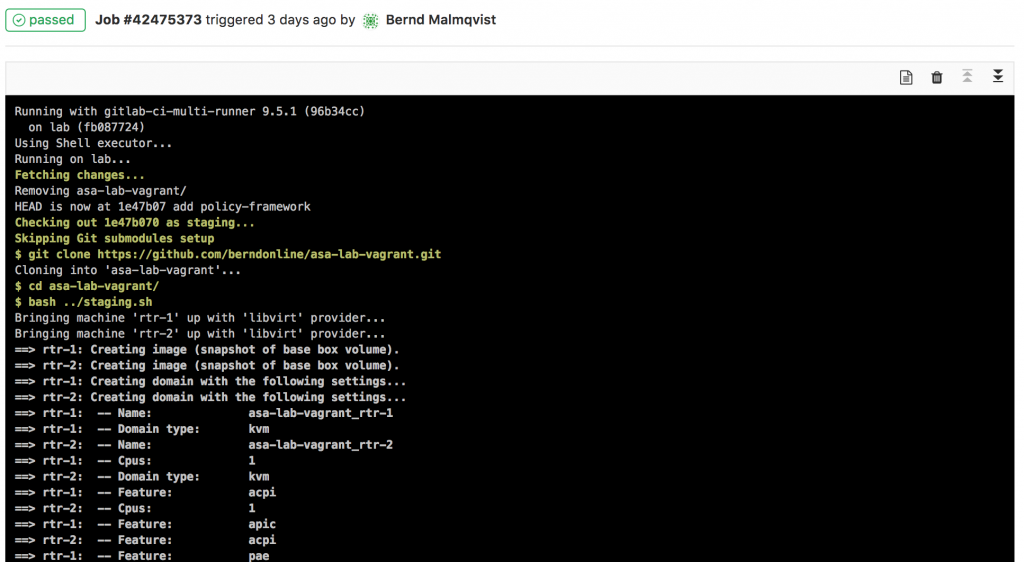

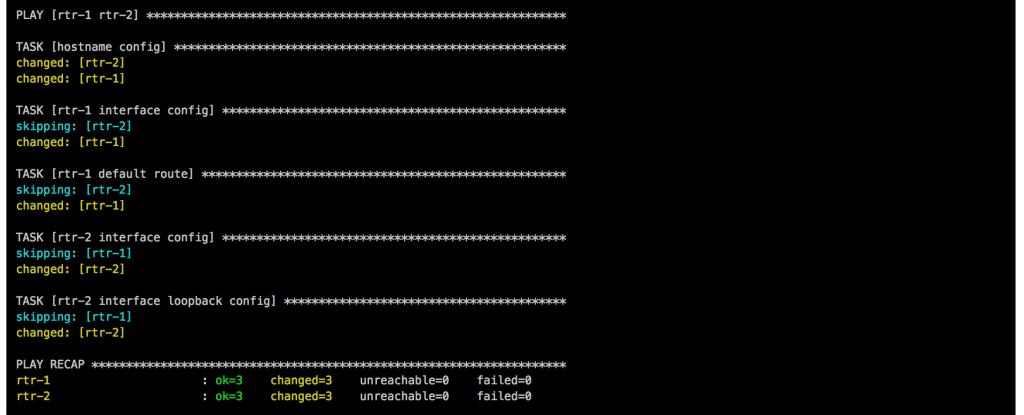

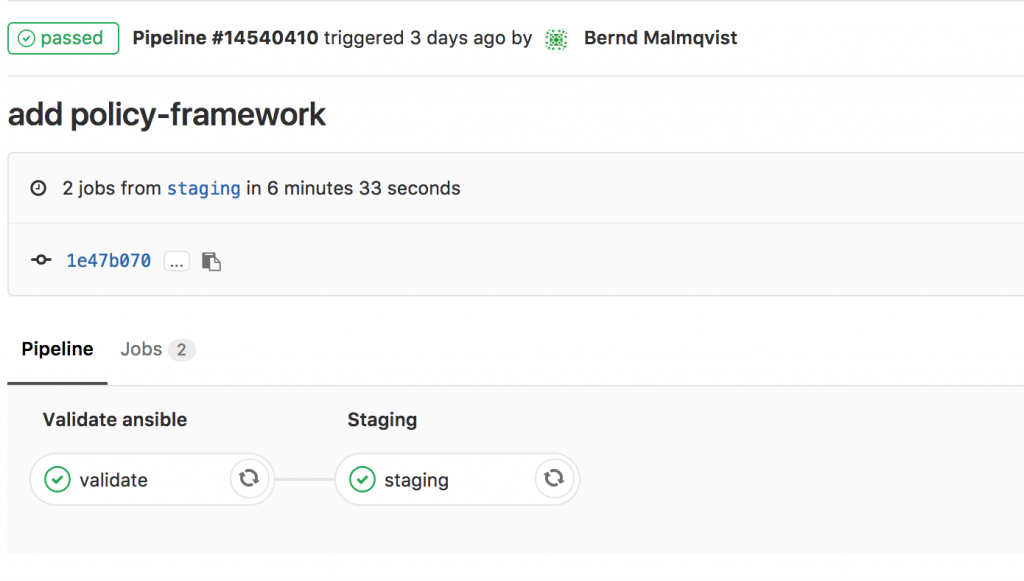

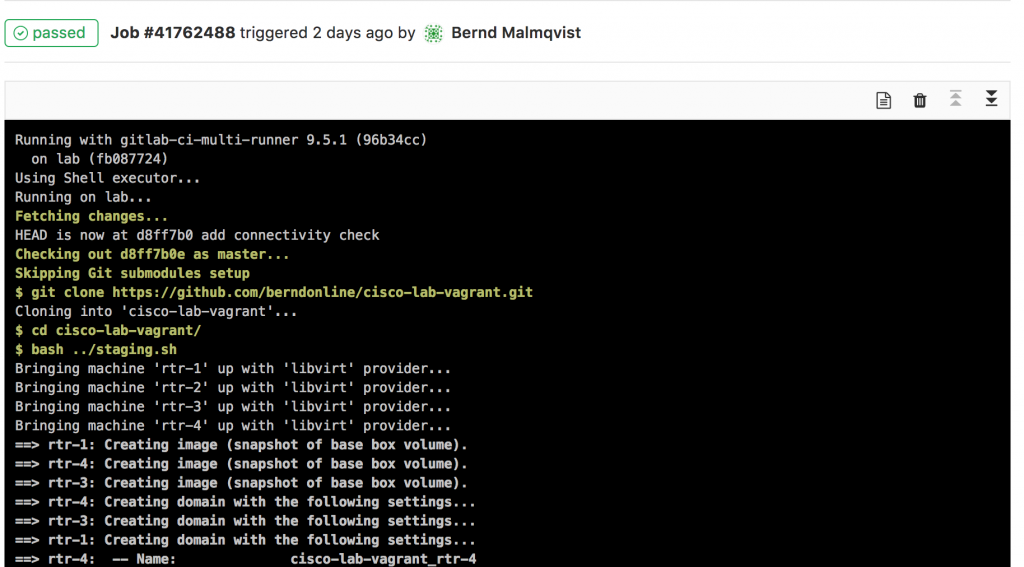

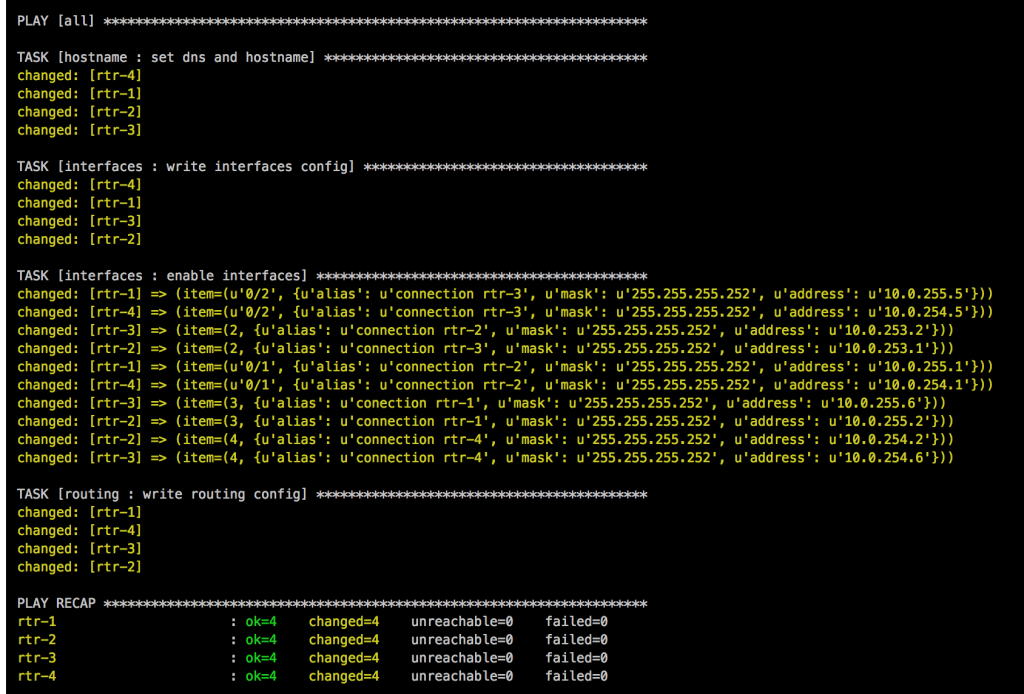

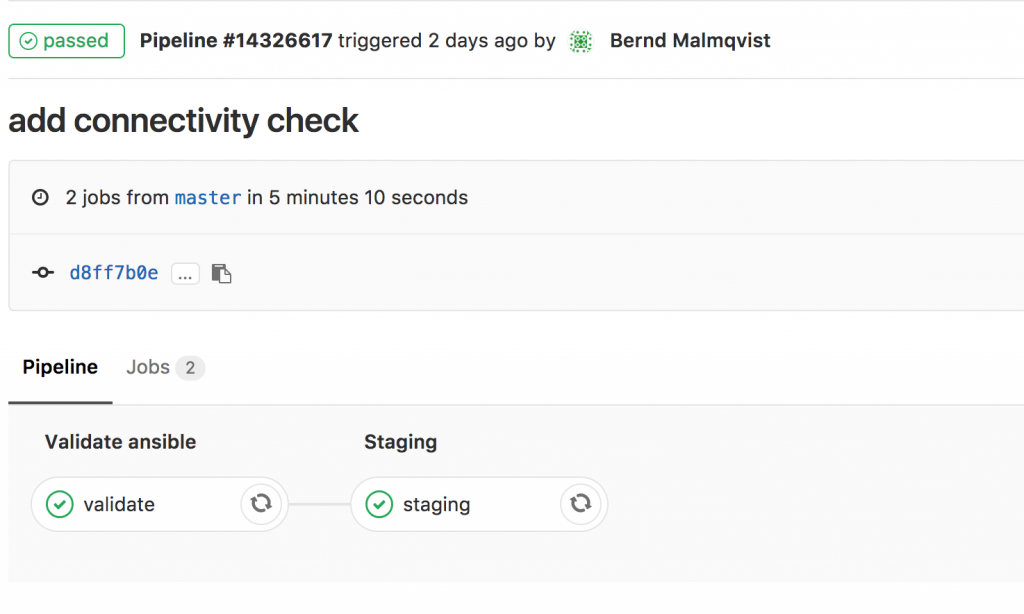

When a change triggers the gitlab-ci pipeline, it spins up the Vagrant instances and runs the Ansible Playbooks. After the Vagrant instances have booted, the two routers rtr-1 and rtr-2 are configured first with cisco_router_config.yml, and then the main site.yml is run.
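A pipeline along these lines could be sketched in a .gitlab-ci.yml as shown here. The stage name, the job name and the clean-up step are assumptions, and the sketch presumes the runner has Vagrant and Ansible installed and that ansible.cfg points to the right inventory. The playbook names are the ones used in this post.

```yaml
---
# .gitlab-ci.yml (illustrative sketch; stage and job names are assumptions)
stages:
  - validate

asa-lab:
  stage: validate
  script:
    # Boot the lab: rtr-1, rtr-2 and asa-1
    - vagrant up
    # Configure the two routers first, then the firewall
    - ansible-playbook cisco_router_config.yml
    - ansible-playbook site.yml
    # Final connectivity check
    - ansible-playbook asa_check_icmp.yml
  after_script:
    # Tear the lab down again, even if a step above failed
    - vagrant destroy -f
```

Because the commands in `script` run in order and the job stops at the first failure, the firewall playbook only runs against routers that were configured successfully.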

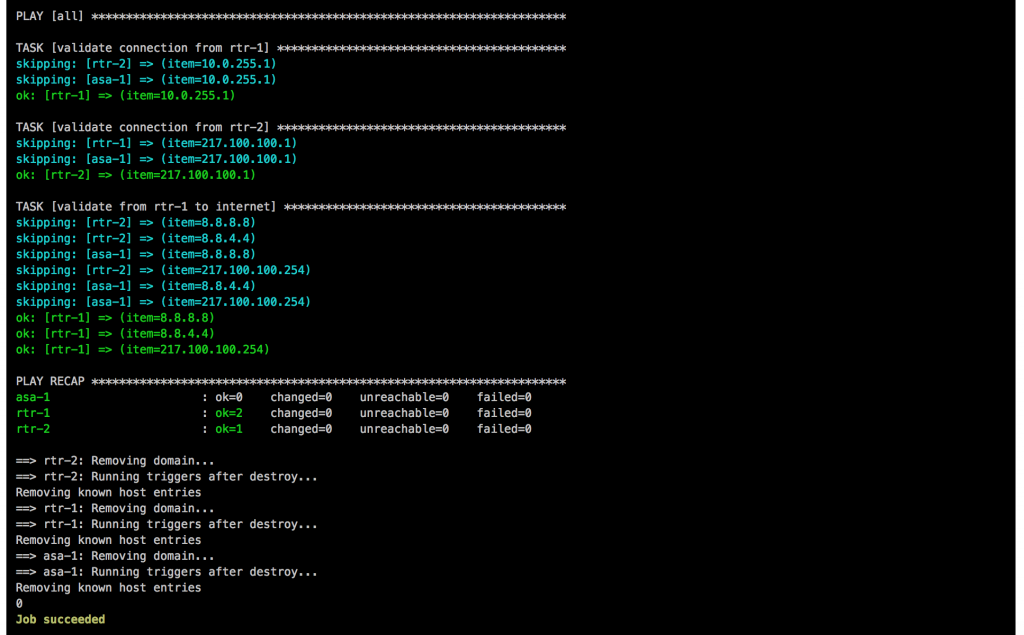

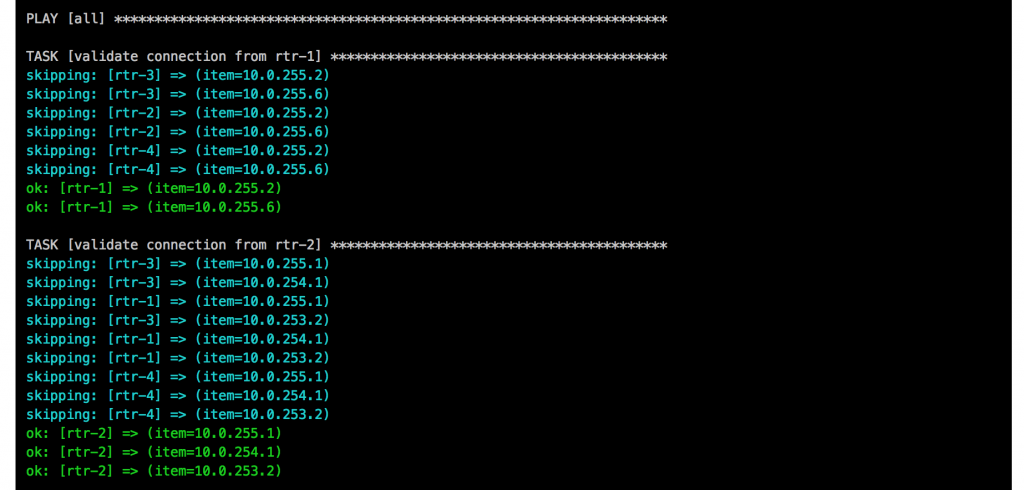

Once the main playbook has finished for the Cisco ASA, a final connectivity check is executed using the playbook asa_check_icmp.yml. It is just a simple ping to see whether the base configuration has been applied correctly.
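Such a check could look roughly like the following sketch, which pings the outside default gateway from the static route above using the asa_command module. The task itself, the `cli` provider variable and the exact success string matched in `wait_for` are assumptions, not the contents of the real asa_check_icmp.yml.

```yaml
---
# asa_check_icmp.yml (illustrative sketch, not the repository's playbook)
- hosts: asa-1
  connection: local
  gather_facts: 'no'

  tasks:
    - name: ping the outside default gateway from the ASA
      asa_command:
        commands:
          - ping 217.100.100.254
        # Assumed wording of the ASA ping summary line
        wait_for:
          - result[0] contains "Success rate is 100 percent"
        # Retry, since the first ping can lose a packet while ARP resolves
        retries: 3
        interval: 5
        provider: "{{ cli }}"           # assumed connection details
```

If the condition is not met after the retries, the task fails and with it the pipeline job.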

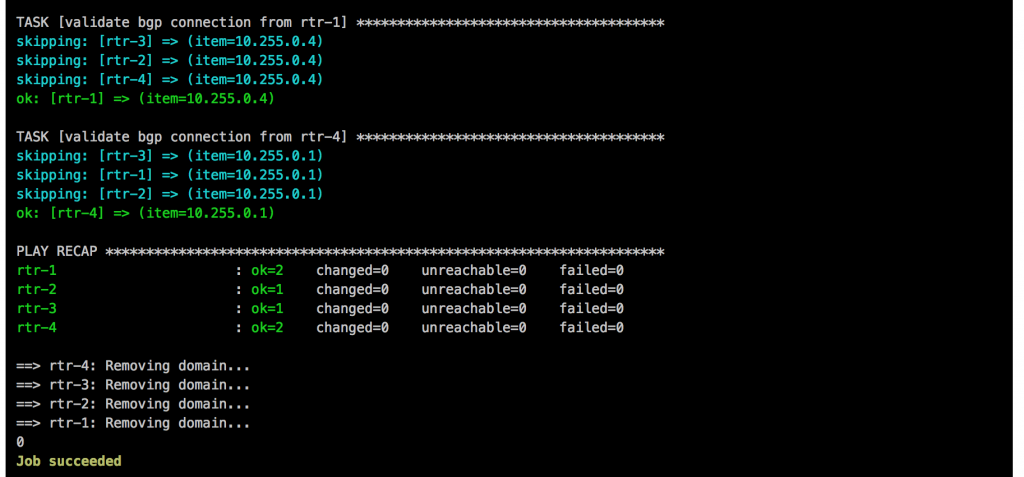

If everything goes well, like in this example, the job is successful:

I will continue to improve the Playbook and the CI/CD pipeline, so come back later to check it out.
