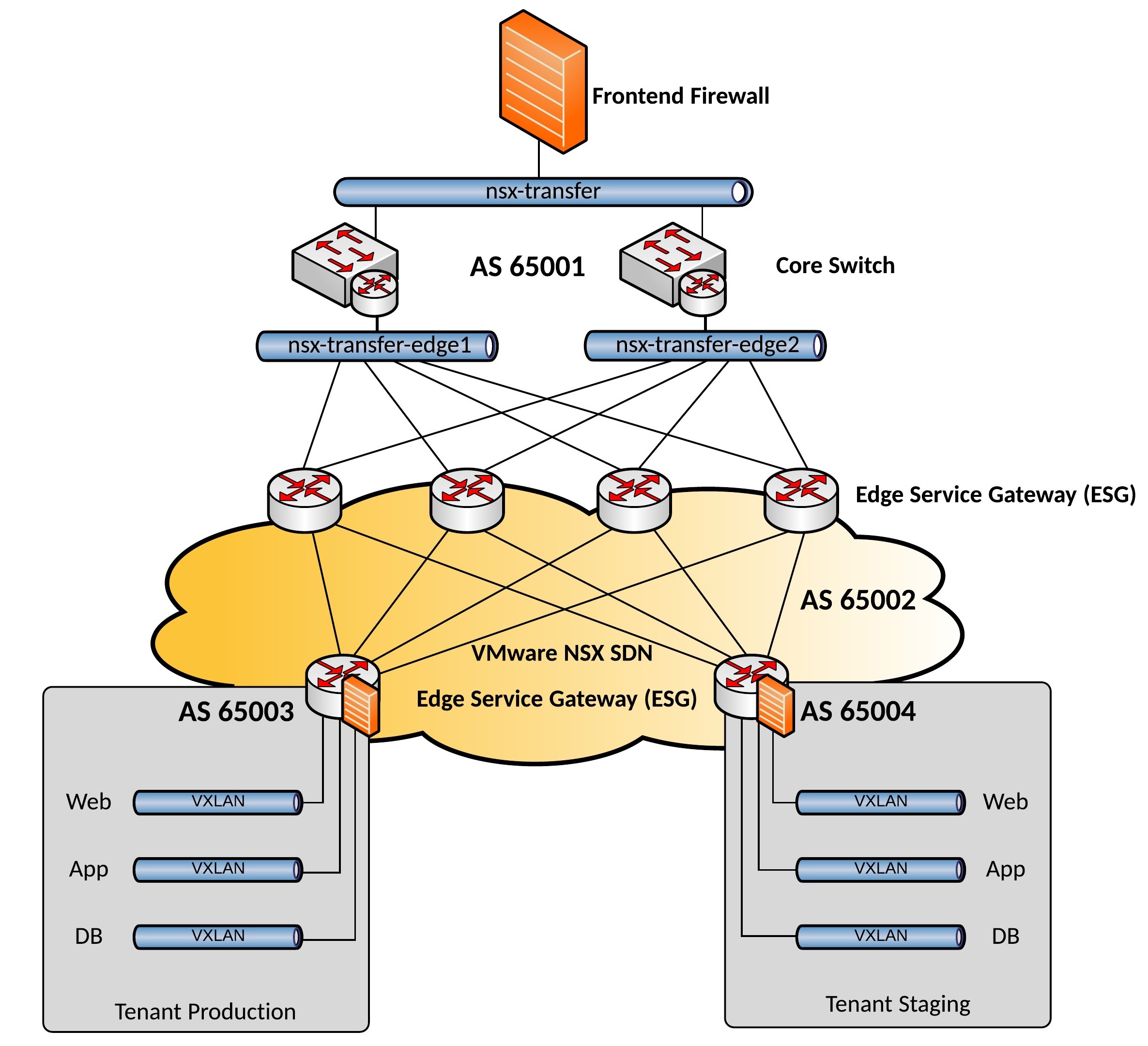

In my previous post about the VMware NSX Edge Routing, I explained how the Edge Service Gateways are connected to the physical network.

Now I want to show an example of what the network design could look like if you want to use NSX:

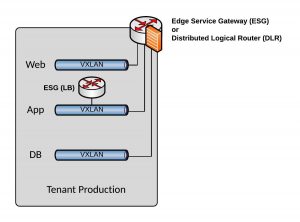

Of course, this really depends on your requirements and how complex your network is. I could easily replace the Tenant Edge Service Gateways (ESG) with a Distributed Logical Router (DLR) if the tenant network is more complex. The advantage of the ESGs is that I can easily enable Load Balancing as a Service to balance traffic between servers in my tier-3 networks.

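The ESGs providing Load Balancing as a Service can also be deployed on-a-stick (one-armed), but for this you need to use SNAT and X-Forwarded-For. SNAT makes sure the servers send their replies back through the load balancer instead of directly to the client, and the X-Forwarded-For HTTP header preserves the original client IP address, which the servers would otherwise no longer see.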
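To illustrate why X-Forwarded-For matters behind a SNAT load balancer, here is a minimal Python sketch of how a backend server could recover the client address. This is not NSX code. The function name `client_ip`, the addresses, and the assumption that the load balancer appends the client address as the last entry of the header are all mine, purely for illustration.

```python
from __future__ import annotations


def client_ip(peer_ip: str, headers: dict[str, str], trusted_lbs: set[str]) -> str:
    """Return the client address as a backend server behind SNAT would resolve it.

    peer_ip     -- source address of the TCP connection (the load balancer after SNAT)
    headers     -- HTTP request headers
    trusted_lbs -- addresses of load balancers allowed to set X-Forwarded-For
                   (hypothetical values in the example below)
    """
    xff = headers.get("X-Forwarded-For", "")
    if peer_ip in trusted_lbs and xff:
        # Assumption: the load balancer appends the address it saw, so the
        # last entry is the one written by the device we trust.
        return xff.split(",")[-1].strip()
    # No trusted load balancer in the path: the peer address is all we know.
    return peer_ip


# Hypothetical one-armed load balancer at 10.0.3.5 in the server segment.
LB = {"10.0.3.5"}

via_lb = client_ip("10.0.3.5", {"X-Forwarded-For": "203.0.113.7"}, LB)
direct = client_ip("10.0.3.20", {"X-Forwarded-For": "198.51.100.1"}, LB)
no_header = client_ip("10.0.3.5", {}, LB)
```

Without the header, every request would appear to come from the load balancer address, which makes access logs and IP-based rules on the servers much less useful.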

It gets very interesting when you start using the Distributed Firewall to filter traffic between servers in the same network, in other words micro-segmentation of your virtual machines within the same subnet. In combination with Security Tags, this can be a very powerful way of securing your networks.
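The idea of tag-based micro-segmentation can be sketched in a few lines of Python. This is only a conceptual model and not the NSX API. The classes `VM` and `Rule`, the tag names, the addresses, and the default-deny behaviour are assumptions I made for the example.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class VM:
    name: str
    ip: str
    tags: frozenset[str]  # stand-in for Security Tags (hypothetical names)


@dataclass(frozen=True)
class Rule:
    src_tag: str
    dst_tag: str
    port: int | None  # None matches any destination port
    action: str       # "allow" or "block"


def evaluate(rules: list[Rule], src: VM, dst: VM, port: int, default: str = "block") -> str:
    """Return the action of the first matching rule, top to bottom."""
    for rule in rules:
        port_ok = rule.port is None or rule.port == port
        if rule.src_tag in src.tags and rule.dst_tag in dst.tags and port_ok:
            return rule.action
    # Assumption: default deny when nothing matches.
    return default


# All three VMs sit in the same subnet, so routing alone cannot separate them.
web1 = VM("web1", "10.0.1.11", frozenset({"web"}))
web2 = VM("web2", "10.0.1.12", frozenset({"web"}))
db1 = VM("db1", "10.0.1.21", frozenset({"db"}))

RULES = [
    Rule("web", "db", 3306, "allow"),  # web servers may reach the database
    Rule("web", "web", None, "block"),  # web servers may not talk to each other
]

web_to_db = evaluate(RULES, web1, db1, 3306)
web_to_web = evaluate(RULES, web1, web2, 22)
db_to_web = evaluate(RULES, db1, web1, 80)
```

The rules never mention an IP address or subnet. Policy follows the tag on the virtual machine, which is what makes filtering inside a single network practical.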

To learn more about what VMware NSX can do, I can only recommend reading the VMware NSX reference design guide. You will find a lot of useful information there on how to configure NSX.

Comment below if you have questions.