This article is about OpenShift networking in general, but I also want to look at the Kubernetes NetworkPolicy feature in a bit more detail. The latest OpenShift version, 3.11, comes with three SDN deployment models, and a fourth is in development:

- ovs-subnet – This creates a single large VXLAN spanning all namespaces, so every pod is able to talk to every other pod.

- ovs-multitenant – As the name says, this separates the namespaces into separate VXLAN segments, and only resources within the same namespace are able to talk to each other. You can join namespaces together or make a namespace global.

- ovs-networkpolicy – The newest SDN deployment method for OpenShift, which enables micro-segmentation to control the communication between pods and namespaces.

- ovs-ovn – The next-generation SDN for OpenShift, not yet officially released. For more information, visit the ovn-kubernetes repository of the Open vSwitch project on GitHub.
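The difference between the first two modes can be pictured with a few lines of Python. This is only a toy model, not how openshift-sdn is implemented: the function name `can_communicate` and the VNID values are made up for illustration. It assumes that ovs-multitenant gives each project a virtual network ID (VNID) and that VNID 0 marks a global project; joining two namespaces would correspond to giving them the same VNID.

```python
# Toy model of pod-to-pod reachability under the two older SDN plugins.
# Function name and VNID values are illustrative, not from a real cluster.

GLOBAL_VNID = 0  # assumption: VNID 0 marks a "global" project


def can_communicate(mode: str, src_vnid: int, dst_vnid: int) -> bool:
    """Return True if a pod in the source project may reach the destination."""
    if mode == "ovs-subnet":
        # One flat network: every pod can reach every other pod.
        return True
    if mode == "ovs-multitenant":
        # Allowed inside one project, or when either side is global.
        return (
            src_vnid == dst_vnid
            or src_vnid == GLOBAL_VNID
            or dst_vnid == GLOBAL_VNID
        )
    raise ValueError(f"unsupported mode: {mode}")


if __name__ == "__main__":
    print(can_communicate("ovs-subnet", 11, 22))       # True
    print(can_communicate("ovs-multitenant", 11, 22))  # False
    print(can_communicate("ovs-multitenant", 11, 0))   # True
```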

Here is an overview of the common ovs-multitenant software-defined network:

On an OpenShift node, the tun0 interface owns the default gateway. It forwards traffic to external endpoints outside the OpenShift platform and routes internal traffic to the Open vSwitch overlay. Both Open vSwitch and iptables are central components of the networking on the platform.
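To make the distinction between internal and external traffic more concrete, here is a small sketch that classifies a destination address. The ranges are assumptions: 10.128.0.0/14 is the pod network that shows up in the iptables output later in this article, and 172.30.0.0/16 is the default OpenShift service network. The function name `classify_destination` is hypothetical, and the real forwarding decision is made by routes, iptables and OpenFlow rules, not by code like this.

```python
import ipaddress

# Assumed ranges; adjust both for your own cluster.
CLUSTER_NETWORK = ipaddress.ip_network("10.128.0.0/14")   # pod IPs
SERVICE_NETWORK = ipaddress.ip_network("172.30.0.0/16")   # service cluster IPs


def classify_destination(ip: str) -> str:
    """Say roughly which kind of destination an IP address belongs to."""
    addr = ipaddress.ip_address(ip)
    if addr in CLUSTER_NETWORK:
        return "pod network"
    if addr in SERVICE_NETWORK:
        return "service network"
    return "external"


if __name__ == "__main__":
    for ip in ("10.128.0.103", "172.30.231.77", "8.8.8.8"):
        print(ip, "->", classify_destination(ip))
```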

For more information, read the official OpenShift documentation on managing networking and configuring the SDN.

NetworkPolicy in Action

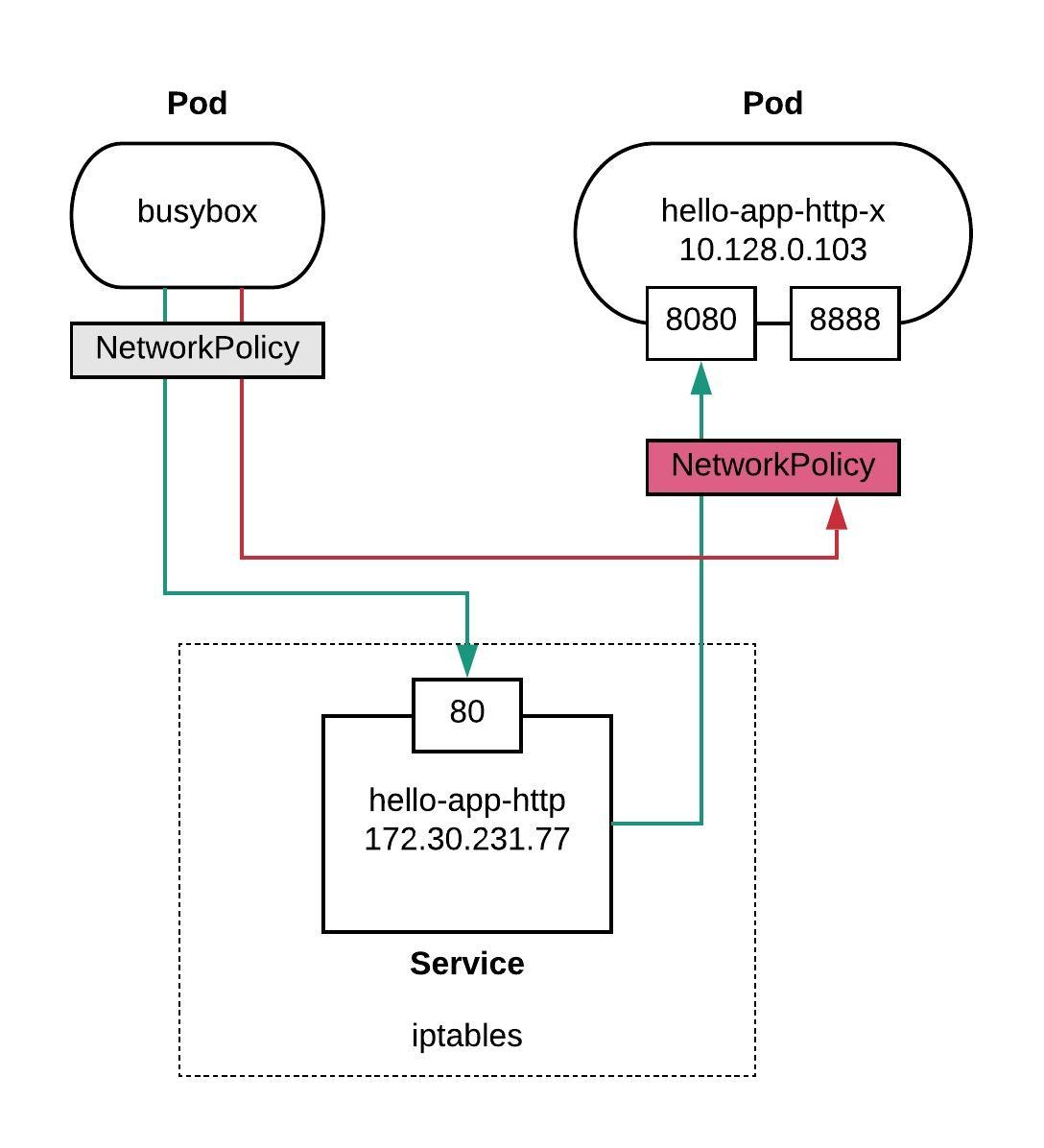

Let me first explain the example I use to test NetworkPolicy. We will have one hello-openshift pod behind a service, and a busybox pod for testing the internal communication. I will create a default ingress deny policy and then specifically allow TCP port 8080 to my hello-openshift pod. I am not planning to restrict the busybox pod with an egress policy, so all egress traffic is allowed.

Here you can find the example YAML files to replicate the layout: busybox.yml and hello-openshift.yml

A short recap on Kubernetes services: a service is implemented as a set of simple iptables entries, and for this reason you cannot restrict the service itself with NetworkPolicy.

    [root@master1 ~]# iptables-save | grep 172.30.231.77
    -A KUBE-SERVICES ! -s 10.128.0.0/14 -d 172.30.231.77/32 -p tcp -m comment --comment "myproject/hello-app-http:web cluster IP" -m tcp --dport 80 -j KUBE-MARK-MASQ
    -A KUBE-SERVICES -d 172.30.231.77/32 -p tcp -m comment --comment "myproject/hello-app-http:web cluster IP" -m tcp --dport 80 -j KUBE-SVC-LFWXBQW674LJXLPD
    [root@master1 ~]#
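A rough way to picture why the service itself is out of reach for NetworkPolicy: the iptables rules rewrite the service address to a pod endpoint (DNAT), so a policy only ever gets to judge pod addresses and ports. The sketch below models that translation with a plain dictionary. The table and the function name `translate` are hypothetical; the addresses are the ones used in this article, and the mapping of service port 80 to container port 8080 is inferred from the tests further down. A real service can have several endpoints.

```python
# Toy model of the destination rewrite done for a service connection.
from typing import Dict, Tuple

Endpoint = Tuple[str, int]  # (IP address, TCP port)

# Hypothetical table: cluster IP and service port -> pod IP and container port
SERVICE_ENDPOINTS: Dict[Endpoint, Endpoint] = {
    ("172.30.231.77", 80): ("10.128.0.103", 8080),
}


def translate(dst_ip: str, dst_port: int) -> Endpoint:
    """Rewrite a service destination to its pod endpoint.

    Destinations that are not a known service pass through unchanged.
    """
    return SERVICE_ENDPOINTS.get((dst_ip, dst_port), (dst_ip, dst_port))


if __name__ == "__main__":
    print(translate("172.30.231.77", 80))    # ('10.128.0.103', 8080)
    print(translate("10.128.0.103", 8888))   # ('10.128.0.103', 8888)
```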

When you install OpenShift with ovs-networkpolicy, the default policy allows all traffic within a namespace. Let’s do a first test without a custom NetworkPolicy rule to see if I am able to connect to my hello-app-http service.

    [root@master1 ~]# oc exec busybox-1-wn592 -- wget -S --spider http://hello-app-http
    Connecting to hello-app-http (172.30.231.77:80)
    HTTP/1.1 200 OK
    Date: Tue, 19 Feb 2019 13:59:04 GMT
    Content-Length: 17
    Content-Type: text/plain; charset=utf-8
    Connection: close
    [root@master1 ~]#

Now we add a default ingress deny policy to the namespace:

    kind: NetworkPolicy
    apiVersion: networking.k8s.io/v1
    metadata:
      name: deny-all-ingress
    spec:
      podSelector:
      ingress: []

After applying the default deny policy, you are no longer able to connect to the hello-app-http service. The connection times out because no flow entries that allow the traffic are defined yet in the OpenFlow table:

    [root@master1 ~]# oc exec busybox-1-wn592 -- wget -S --spider http://hello-app-http
    Connecting to hello-app-http (172.30.231.77:80)
    wget: can't connect to remote host (172.30.231.77): Connection timed out
    command terminated with exit code 1
    [root@master1 ~]#

Let’s add a new policy that allows TCP port 8080 and uses a podSelector to match all pods with the label “role: web”.

    kind: NetworkPolicy
    apiVersion: networking.k8s.io/v1
    metadata:
      name: allow-tcp8080
    spec:
      podSelector:
        matchLabels:
          role: web
      ingress:
      - ports:
        - protocol: TCP
          port: 8080

This alone doesn’t do anything yet: you still need to patch the deployment config and add the label “role: web” to the metadata of its pod template.

oc patch dc/hello-app-http --patch '{"spec":{"template":{"metadata":{"labels":{"role":"web"}}}}}'
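The nesting of the patch is easier to read when it is built from a dictionary. The following sketch produces the same JSON string as the one passed to `oc patch` above; the helper name `label_patch` is made up for this example.

```python
import json


def label_patch(labels: dict) -> str:
    """Build a patch body that adds labels to the pod template metadata."""
    patch = {
        "spec": {
            "template": {
                "metadata": {
                    "labels": labels,
                }
            }
        }
    }
    # Compact separators give the same one-line form used on the command line.
    return json.dumps(patch, separators=(",", ":"))


if __name__ == "__main__":
    print(label_patch({"role": "web"}))
    # {"spec":{"template":{"metadata":{"labels":{"role":"web"}}}}}
```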

To roll back the previous changes, simply use the ‘oc rollback dc/hello-app-http’ command.

Now let’s check the Open vSwitch flow table: a new flow has been added with the destination of my hello-openshift pod, 10.128.0.103, on port 8080.

Afterwards we try again to connect to my hello-app-http service, and you can see that the connection is successful:

    [root@master1 ~]# oc exec ovs-q4p8m -n openshift-sdn -- ovs-ofctl -O OpenFlow13 dump-flows br0 | grep '10.128.0.103.*8080'
    cookie=0x0, duration=221.251s, table=80, n_packets=15, n_bytes=1245, priority=150,tcp,reg1=0x2dfc74,nw_dst=10.128.0.103,tp_dst=8080 actions=output:NXM_NX_REG2[]
    [root@master1 ~]#
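If you want to pick such a flow entry apart, a few lines of Python are enough. The sketch below splits the line from the output above into its fields; `parse_flow` is a hypothetical helper, not part of any Open vSwitch tooling. Reading `table=80` as the policy table and `reg1` as the VNID of the destination namespace is an assumption about openshift-sdn conventions that you should verify against your own cluster.

```python
# The flow entry copied from the ovs-ofctl output in this article.
FLOW = (
    "cookie=0x0, duration=221.251s, table=80, n_packets=15, n_bytes=1245, "
    "priority=150,tcp,reg1=0x2dfc74,nw_dst=10.128.0.103,tp_dst=8080 "
    "actions=output:NXM_NX_REG2[]"
)


def parse_flow(line: str) -> dict:
    """Split one dump-flows line into a dict of match fields plus actions."""
    match_part, _, actions = line.strip().partition(" actions=")
    fields = {}
    for token in match_part.replace(", ", ",").split(","):
        key, sep, value = token.partition("=")
        # Bare tokens such as "tcp" are protocol keywords without a value.
        fields[key] = value if sep else True
    fields["actions"] = actions
    return fields


if __name__ == "__main__":
    flow = parse_flow(FLOW)
    print(flow["table"], flow["nw_dst"], flow["tp_dst"])  # 80 10.128.0.103 8080
    print(int(flow["reg1"], 16))  # assumed: destination VNID as a decimal number
```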

    [root@master1 ~]# oc exec busybox-1-wn592 -- wget -S --spider http://hello-app-http
    Connecting to hello-app-http (172.30.231.77:80)
    HTTP/1.1 200 OK
    Date: Tue, 19 Feb 2019 14:21:57 GMT
    Content-Length: 17
    Content-Type: text/plain; charset=utf-8
    Connection: close
    [root@master1 ~]#

The hello-openshift container exposes two TCP ports, 8080 and 8888. Finally, let’s try to connect to the pod IP address on port 8888. The connection fails because the policy only allows port 8080.

    [root@master1 ~]# oc exec busybox-1-wn592 -- wget -S --spider http://10.128.0.103:8888
    Connecting to 10.128.0.103:8888 (10.128.0.103:8888)
    wget: can't connect to remote host (10.128.0.103): Connection timed out
    command terminated with exit code 1
    [root@master1 ~]#
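The results of the tests above follow from how ingress policies combine: a pod that no policy selects accepts everything, and a selected pod accepts only what at least one of its policies allows. Here is a simplified sketch of that logic. The `Policy` class and `ingress_allowed` function are made up for this example; the model only looks at ports and ignores `from` selectors, protocols and egress, so it is not a full implementation of the NetworkPolicy semantics.

```python
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class Policy:
    name: str
    pod_selector: Dict[str, str]  # empty dict selects every pod
    allowed_ports: List[int] = field(default_factory=list)  # empty: nothing allowed


def selects(policy: Policy, pod_labels: Dict[str, str]) -> bool:
    """A policy selects a pod when all selector labels match."""
    return all(pod_labels.get(k) == v for k, v in policy.pod_selector.items())


def ingress_allowed(policies: List[Policy], pod_labels: Dict[str, str], port: int) -> bool:
    selecting = [p for p in policies if selects(p, pod_labels)]
    if not selecting:
        return True  # no policy selects the pod, so it is not isolated
    # Policies are additive: one matching allow rule is enough.
    return any(port in p.allowed_ports for p in selecting)


deny_all = Policy("deny-all-ingress", {})
allow_8080 = Policy("allow-tcp8080", {"role": "web"}, [8080])

if __name__ == "__main__":
    both = [deny_all, allow_8080]
    print(ingress_allowed([], {}, 8080))                 # True: no policy at all
    print(ingress_allowed([deny_all], {}, 8080))         # False: default deny
    print(ingress_allowed(both, {}, 8080))               # False: label still missing
    print(ingress_allowed(both, {"role": "web"}, 8080))  # True
    print(ingress_allowed(both, {"role": "web"}, 8888))  # False
```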

There are great posts on the Red Hat OpenShift blog that you should check out: networkpolicies-and-microsegmentation and openshift-and-network-security-zones-coexistence-approaches. I can also recommend having a look at Ahmet Alp Balkan’s GitHub repository of Kubernetes network policy recipes, where you can find some good examples.