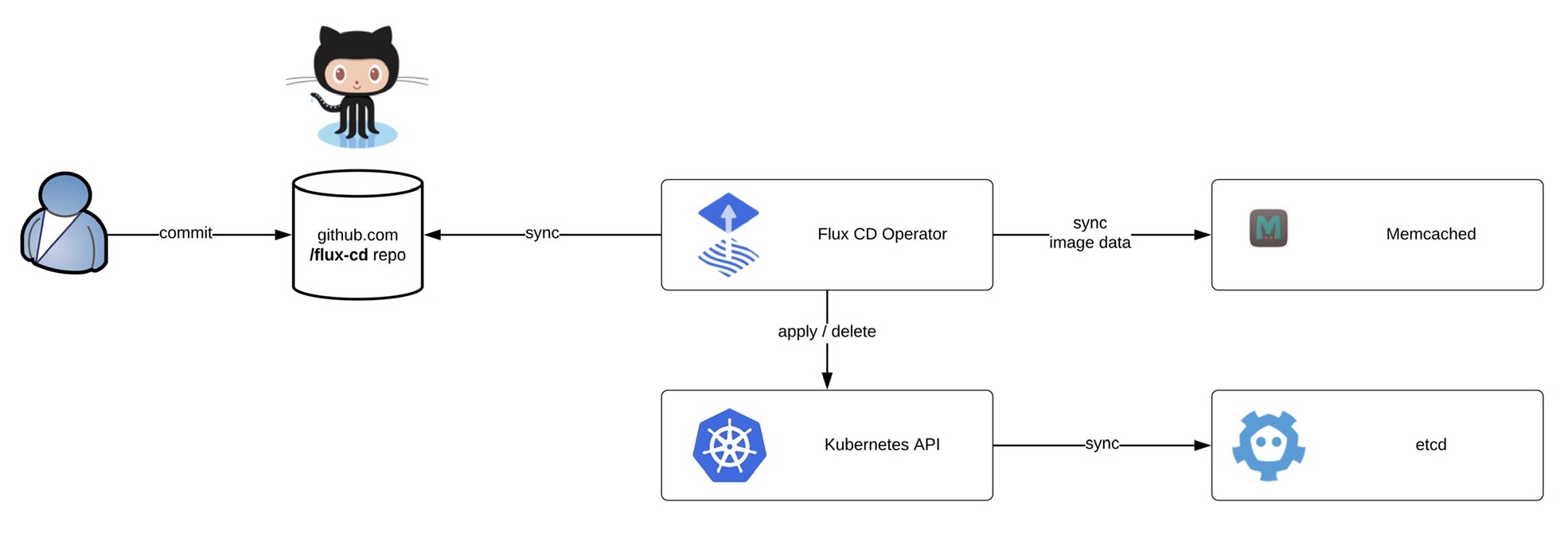

Kubernetes is becoming more and more popular, and so is managing clusters at scale. This article is about how to manage Kubernetes clusters the GitOps way using the Flux CD operator.

Flux can monitor the container image and code repositories that you specify and trigger deployments to automatically change the configuration state of your Kubernetes cluster. The cluster configuration is managed centrally and stored in declarative form in Git, so there is no need for an administrator to apply manifests manually: the Flux operator synchronises with the repository and applies or deletes cluster configuration accordingly.

Before we start deploying the operator we need to install the fluxctl command-line utility and create the namespace:

    sudo wget -O /usr/local/bin/fluxctl https://github.com/fluxcd/flux/releases/download/1.18.0/fluxctl_linux_amd64
    sudo chmod 755 /usr/local/bin/fluxctl
    kubectl create ns flux

Deploying the Flux operator is straightforward and requires only a few options, such as the Git repository and the Git path. The path is important for my example because it tells the operator which folders to search for manifests:

    $ fluxctl install --git-email=[email protected] --git-url=git@github.com:berndonline/flux-cd.git --git-path=clusters/gke,common/stage --manifest-generation=true --git-branch=master --namespace=flux --registry-disable-scanning | kubectl apply -f -
    deployment.apps/memcached created
    service/memcached created
    serviceaccount/flux created
    clusterrole.rbac.authorization.k8s.io/flux created
    clusterrolebinding.rbac.authorization.k8s.io/flux created
    deployment.apps/flux created
    secret/flux-git-deploy created

After you have applied the configuration, wait until the Flux pods are up and running:

    $ kubectl get pods -n flux
    NAME                       READY   STATUS    RESTARTS   AGE
    flux-85cd9cd746-hnb4f      1/1     Running   0          74m
    memcached-5dcd7579-d6vwh   1/1     Running   0          20h

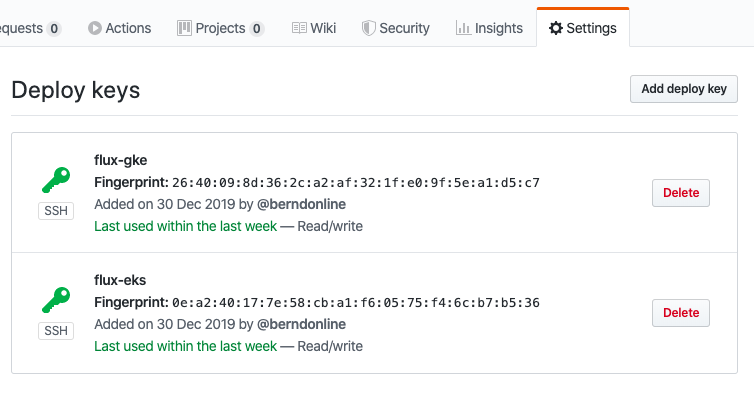

The last step is to get the Flux operator deploy keys and copy the output to add to your Git repository:

    fluxctl identity --k8s-fwd-ns flux

Now you are ready to synchronise the Flux operator with the repository. By default Flux automatically synchronises every 5 minutes to apply configuration changes:

    $ fluxctl sync --k8s-fwd-ns flux
    Synchronizing with git@github.com:berndonline/flux-cd.git
    Revision of master to apply is 726944d
    Waiting for 726944d to be applied ...
    Done.

You are able to list workloads which are managed by the Flux operator:

    $ fluxctl list-workloads --k8s-fwd-ns=flux -a
    WORKLOAD                             CONTAINER         IMAGE                            RELEASE  POLICY
    default:deployment/hello-kubernetes  hello-kubernetes  paulbouwer/hello-kubernetes:1.5  ready    automated

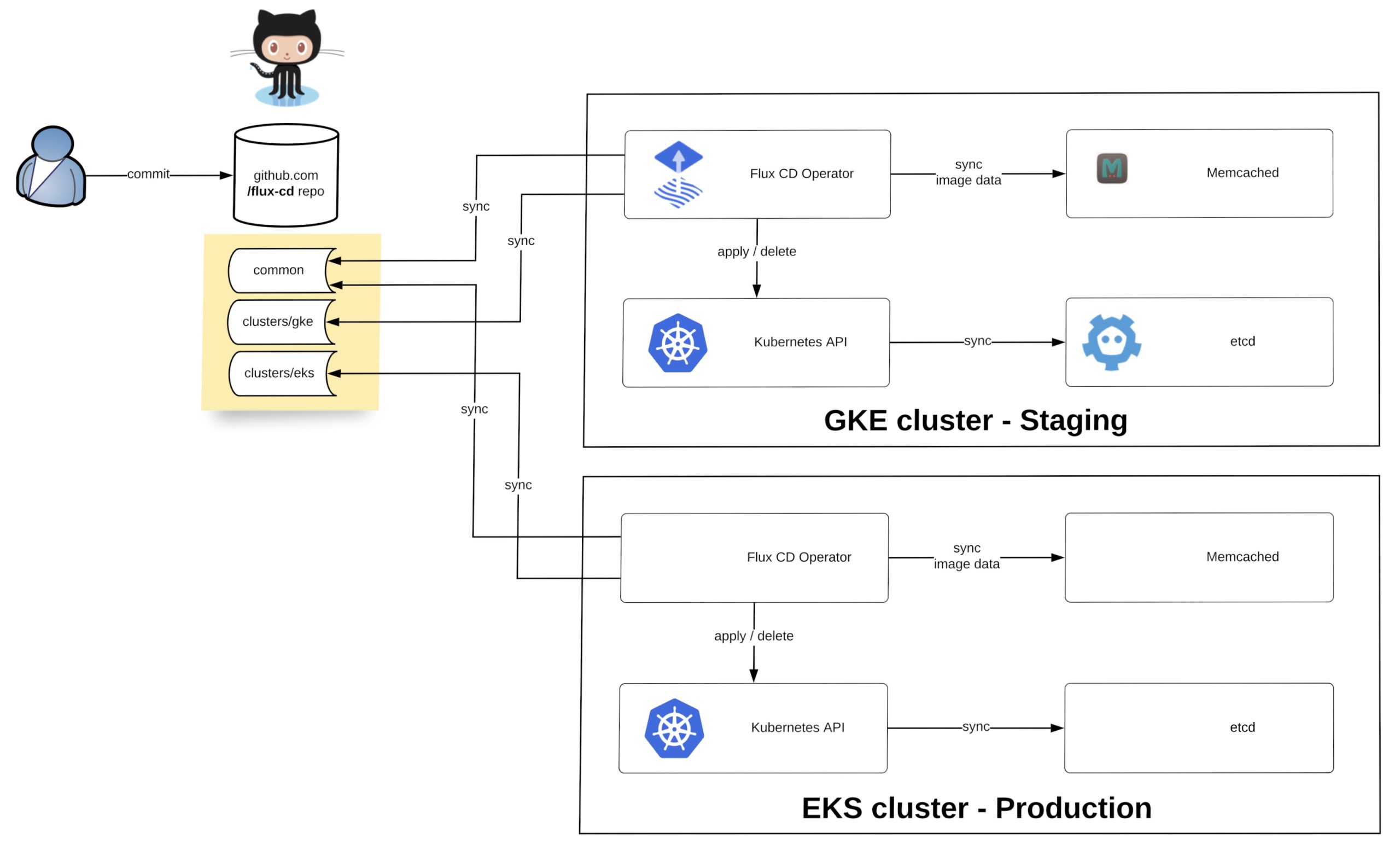

How do we manage the configuration for multiple Kubernetes clusters?

I want to show you a simple example using Kustomize to manage multiple clusters across two environments (staging and production) with Flux. Basically, you have a single repository, and each cluster synchronises the part of the configuration selected by the --git-path option of the Flux operator. The --manifest-generation option enables Kustomize for the operator; it also requires a .flux.yaml file in the repository, which tells Flux to run kustomize build on the cluster directories and apply the generated manifests.
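To make the role of .flux.yaml more concrete, here is a minimal sketch that writes one using the `patchUpdated` layout described in the Flux v1 manifest-generation documentation. The `REPO_DIR` variable and the `flux-patch.yaml` file name are assumptions for illustration, and the file in the example repository may look different.

```shell
# Sketch only: REPO_DIR is a hypothetical path to your repository checkout.
# It defaults to a scratch directory so the snippet is safe to run as-is.
REPO_DIR="${REPO_DIR:-$(mktemp -d)}"

# Flux runs the generator command inside each --git-path directory and
# applies whatever manifests the command prints to stdout.
cat > "${REPO_DIR}/.flux.yaml" <<'EOF'
version: 1
patchUpdated:
  generators:
    - command: kustomize build .
  patchFile: flux-patch.yaml
EOF

# Show the result for review before committing it.
cat "${REPO_DIR}/.flux.yaml"
```

Because the file sits at the repository root, one generator definition can serve every directory listed in --git-path.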

Let’s look at the repository file and folder structure. We have the base folder containing the common deployment configuration, the common folder with the environment separation for the stage and prod overlays, and the clusters folder, which contains cluster-specific configuration:

    ├── .flux.yaml
    ├── base
    │   └── common
    │       ├── deployment.yaml
    │       ├── kustomization.yaml
    │       ├── namespace.yaml
    │       └── service.yaml
    ├── clusters
    │   ├── eks
    │   │   ├── eks-app1
    │   │   │   ├── deployment.yaml
    │   │   │   ├── kustomization.yaml
    │   │   │   └── service.yaml
    │   │   └── kustomization.yaml
    │   └── gke
    │       ├── gke-app1
    │       │   ├── deployment.yaml
    │       │   ├── kustomization.yaml
    │       │   └── service.yaml
    │       ├── gke-app2
    │       │   ├── deployment.yaml
    │       │   ├── kustomization.yaml
    │       │   └── service.yaml
    │       └── kustomization.yaml
    └── common
        ├── prod
        │   ├── prod.yaml
        │   └── kustomization.yaml
        └── stage
            ├── team1
            │   ├── deployment.yaml
            │   ├── kustomization.yaml
            │   ├── namespace.yaml
            │   └── service.yaml
            ├── stage.yaml
            └── kustomization.yaml

If you are new to Kustomize I would recommend reading the article Kustomize – The right way to do templating in Kubernetes.
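As a rough illustration of how the kustomization.yaml files in this tree might tie the folders together, the sketch below writes two of them into a scratch directory. The contents are my assumptions based on the folder names (for example, that stage.yaml is a strategic merge patch), not a copy of the example repository, and `SCRATCH_DIR` is a hypothetical variable.

```shell
# Sketch only: SCRATCH_DIR is hypothetical; the YAML below is an assumed
# layout inferred from the directory tree, not the repository's real files.
SCRATCH_DIR="${SCRATCH_DIR:-$(mktemp -d)}"
mkdir -p "${SCRATCH_DIR}/clusters/gke" "${SCRATCH_DIR}/common/stage"

# Cluster level: pull in each application directory of the GKE cluster.
cat > "${SCRATCH_DIR}/clusters/gke/kustomization.yaml" <<'EOF'
apiVersion: kustomize.config.k8s.io/v1beta1
kind: Kustomization
resources:
  - gke-app1
  - gke-app2
EOF

# Environment level: reuse the shared base, add the team1 resources,
# and overlay stage-specific changes (assumed to live in stage.yaml).
cat > "${SCRATCH_DIR}/common/stage/kustomization.yaml" <<'EOF'
apiVersion: kustomize.config.k8s.io/v1beta1
kind: Kustomization
resources:
  - ../../base/common
  - team1
patchesStrategicMerge:
  - stage.yaml
EOF

# List what was written.
find "${SCRATCH_DIR}" -name kustomization.yaml
```

With a layout like this, running kustomize build in clusters/gke and in common/stage would produce the two sets of manifests that a staging cluster applies.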

The last thing we need to do is deploy the Flux operator to the two Kubernetes clusters. The only difference between them is the --git-path option, which points the operator to the cluster and common directories where Kustomize applies the overlays based on what is specified in kustomization.yaml. You can find more details about the configuration in my example repository: https://github.com/berndonline/flux-cd

Flux config for Google GKE staging cluster:

    fluxctl install --git-email=[email protected] --git-url=git@github.com:berndonline/flux-cd.git --git-path=clusters/gke,common/stage --manifest-generation=true --git-branch=master --namespace=flux | kubectl apply -f -

Flux config for Amazon EKS production cluster:

    fluxctl install --git-email=[email protected] --git-url=git@github.com:berndonline/flux-cd.git --git-path=clusters/eks,common/prod --manifest-generation=true --git-branch=master --namespace=flux | kubectl apply -f -
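Since the two installs differ only in the Git path, they can be wrapped in a small helper. The following is a sketch under a few assumptions: the kubeconfig context names `gke-stage` and `eks-prod` are hypothetical, `GIT_EMAIL` is a placeholder you would replace, and the flux namespace is assumed to exist on each cluster already. By default it only prints what it would do; set `APPLY=1` to run the real commands.

```shell
#!/usr/bin/env bash
set -euo pipefail

GIT_URL="git@github.com:berndonline/flux-cd.git"
# Placeholder address; replace with the committer email you want Flux to use.
GIT_EMAIL="${GIT_EMAIL:-flux@example.invalid}"

# Build the --git-path value: cluster directory plus environment overlay.
git_path_for() {
  local cluster="$1" env="$2"
  printf 'clusters/%s,common/%s\n' "$cluster" "$env"
}

# Install Flux into the cluster behind the given kubeconfig context.
install_flux() {
  local context="$1" cluster="$2" env="$3"
  local git_path
  git_path="$(git_path_for "$cluster" "$env")"
  if [ "${APPLY:-0}" = "1" ]; then
    fluxctl install --git-email="$GIT_EMAIL" --git-url="$GIT_URL" \
      --git-path="$git_path" --manifest-generation=true \
      --git-branch=master --namespace=flux \
      | kubectl --context="$context" apply -f -
  else
    # Dry run: report the target context and Git path only.
    echo "[dry-run] context=${context} git-path=${git_path}"
  fi
}

# Hypothetical context names; adjust to match your kubeconfig.
install_flux gke-stage gke stage
install_flux eks-prod eks prod
```

Keeping the path logic in one function makes it harder for a production cluster to end up pointing at the staging overlay by accident.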

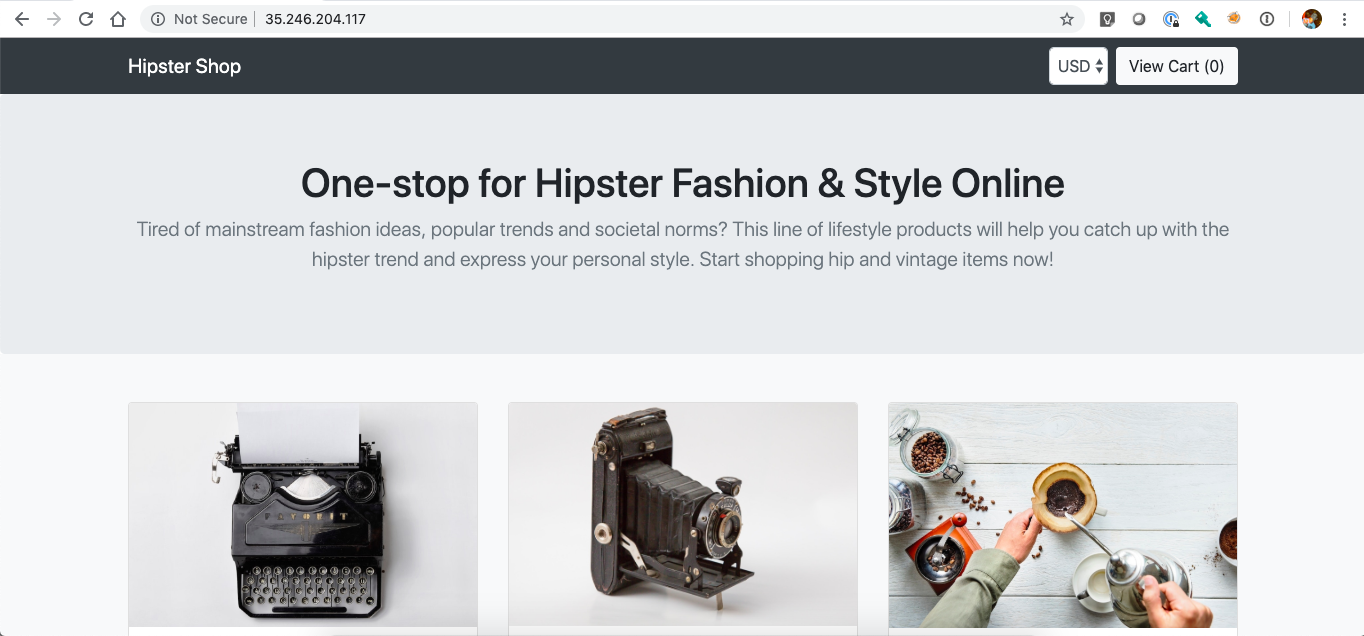

After a few minutes the configuration is applied to the two clusters and you can validate the configuration.

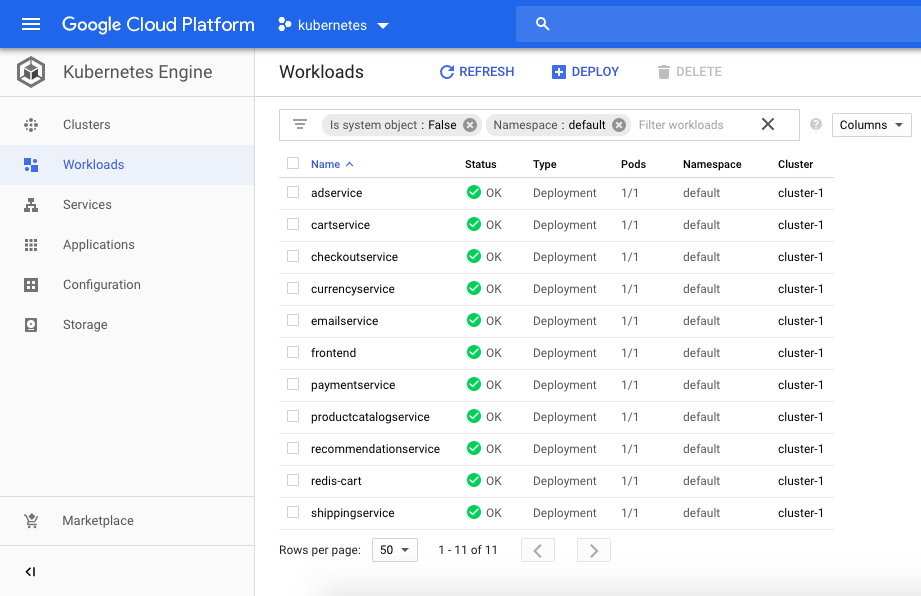

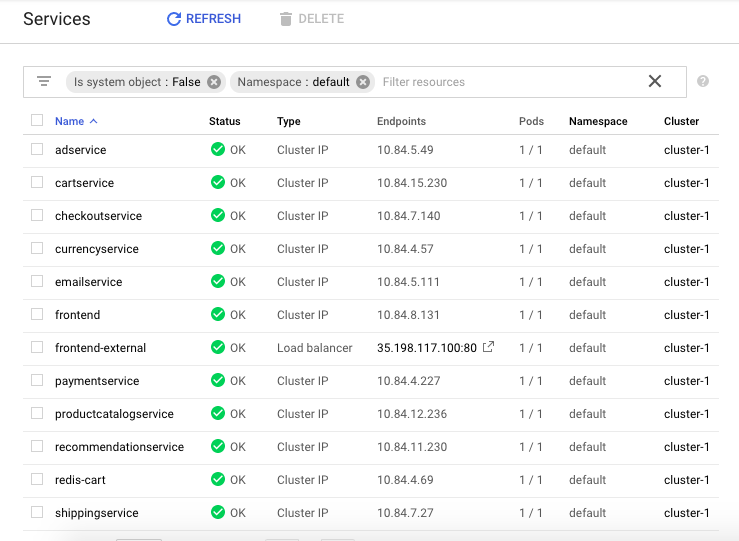

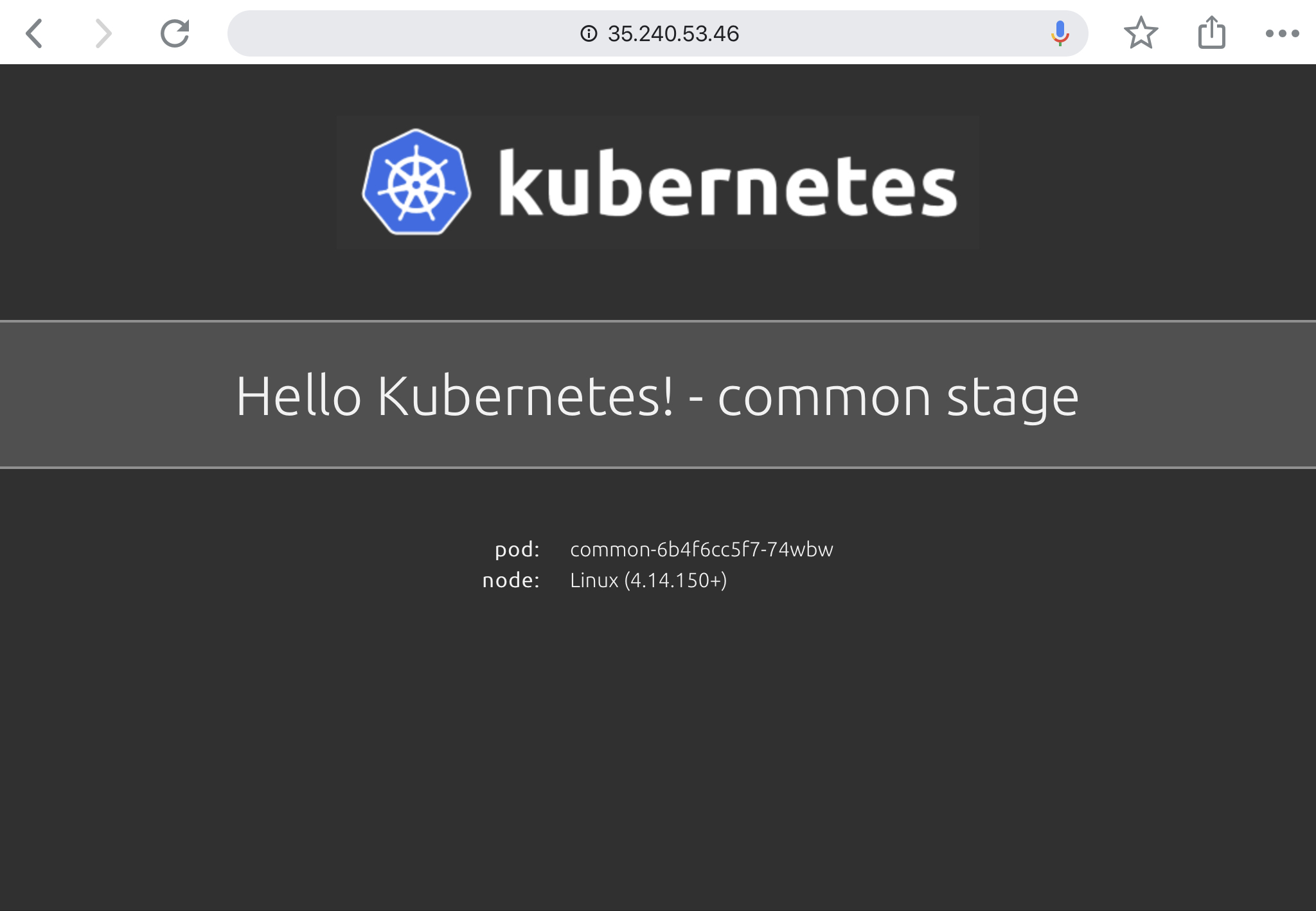

Google GKE stage workloads:

    $ fluxctl list-workloads --k8s-fwd-ns=flux -a
    WORKLOAD                  CONTAINER         IMAGE                            RELEASE  POLICY
    common:deployment/common  hello-kubernetes  paulbouwer/hello-kubernetes:1.5  ready    automated
    default:deployment/gke1   hello-kubernetes  paulbouwer/hello-kubernetes:1.5  ready
    default:deployment/gke2   hello-kubernetes  paulbouwer/hello-kubernetes:1.5  ready
    team1:deployment/team1    hello-kubernetes  paulbouwer/hello-kubernetes:1.5  ready

    $ kubectl get svc --all-namespaces | grep LoadBalancer
    common    common   LoadBalancer   10.91.14.186   35.240.53.46     80:31537/TCP   16d
    default   gke1     LoadBalancer   10.91.7.169    35.195.241.46    80:30218/TCP   16d
    default   gke2     LoadBalancer   10.91.10.239   35.195.144.68    80:32589/TCP   16d
    team1     team1    LoadBalancer   10.91.1.178    104.199.107.56   80:31049/TCP   16d

GKE common stage application:

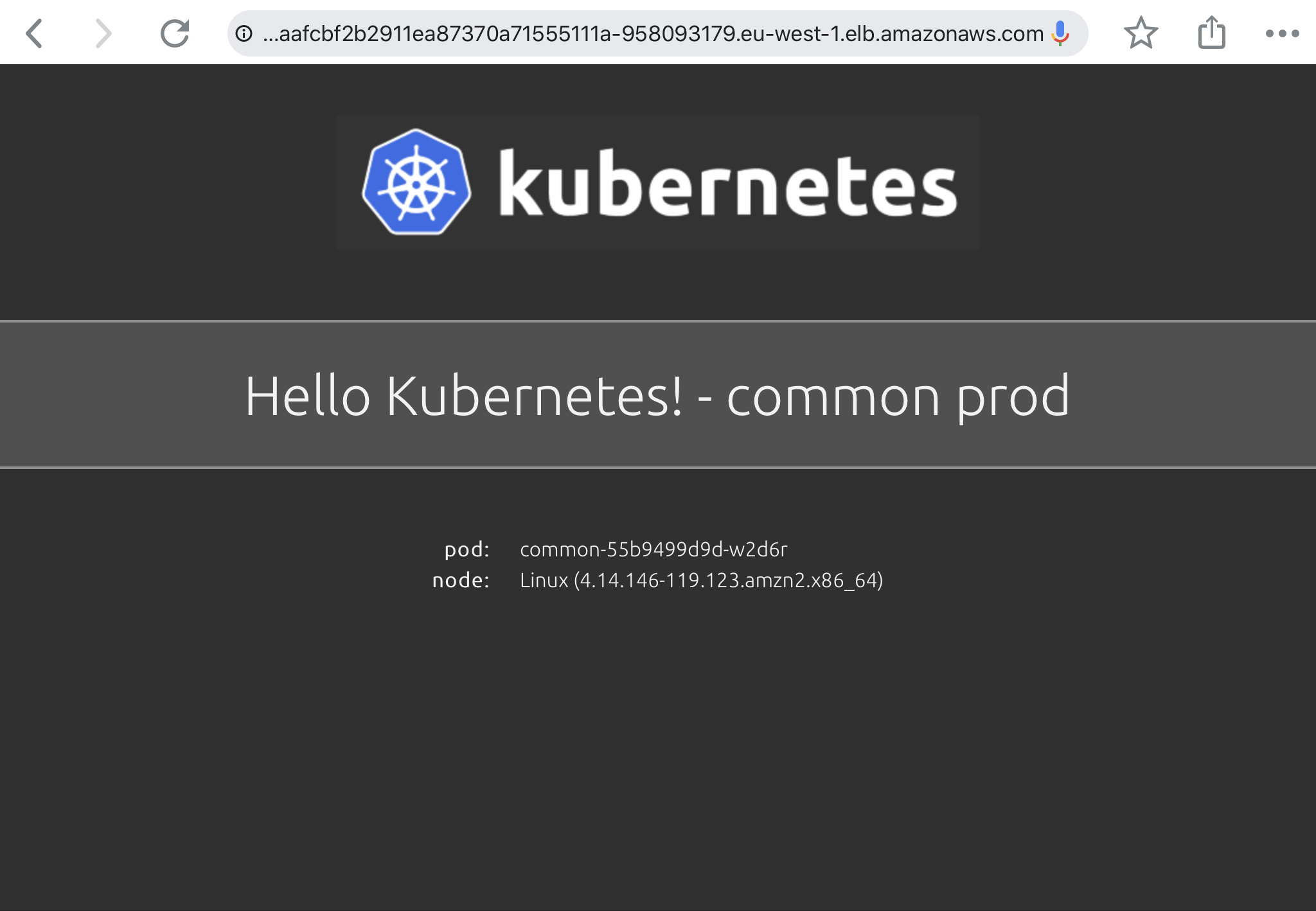

Amazon EKS prod workloads:

    $ fluxctl list-workloads --k8s-fwd-ns=flux -a
    WORKLOAD                  CONTAINER         IMAGE                            RELEASE  POLICY
    common:deployment/common  hello-kubernetes  paulbouwer/hello-kubernetes:1.5  ready    automated
    default:deployment/eks1   hello-kubernetes  paulbouwer/hello-kubernetes:1.5  ready

    $ kubectl get svc --all-namespaces | grep LoadBalancer
    common    common   LoadBalancer   10.100.254.171   a4caafcbf2b2911ea87370a71555111a-958093179.eu-west-1.elb.amazonaws.com    80:32318/TCP   3m8s
    default   eks1     LoadBalancer   10.100.170.10    a4caeada52b2911ea87370a71555111a-1261318311.eu-west-1.elb.amazonaws.com   80:32618/TCP   3m8s

EKS common prod application:

I hope this article is useful to get started with GitOps and the Flux operator. In the future, I would like to see Flux being able to watch git tags which will make it easier to promote changes and manage clusters with version tags.

For more technical information have a look at the Flux CD documentation.